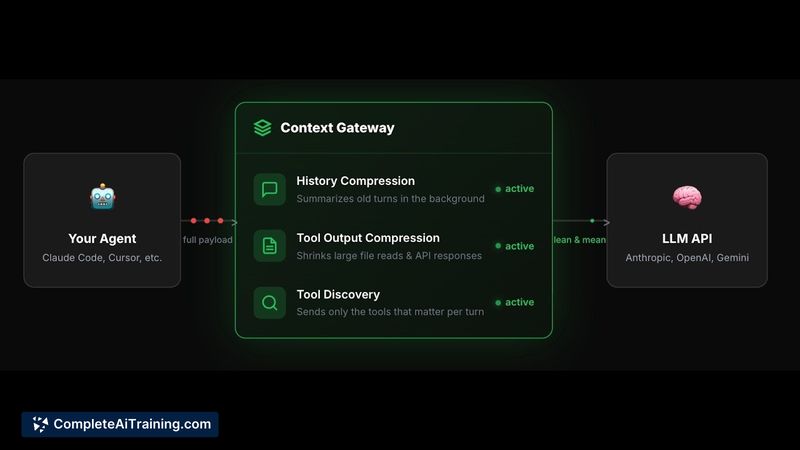

About Context Gateway

Context Gateway is a context compression proxy for LLM-based agents that reduces token spend and lowers latency by compressing tool outputs while preserving important context. It targets Claude Code, Codex, OpenClaw and similar agent toolchains, and the team reports setup takes less than a minute.

Review

Agent tool calls often return large, repetitive outputs that bloat the context window and drive up both cost and response time. Context Gateway targets this problem with instant context compaction, a spend cap for Claude Code, Slack notifications, and other quality-of-life features that keep agent sessions lean and easier to manage.

Key Features

- Context compression proxy that reduces token spend and improves response times for agents like Claude Code, Codex, and OpenClaw.

- Instant context compaction, a faster alternative to waiting for built-in compaction commands.

- Spend cap controls and optional Slack notifications to help manage costs and alert on large sessions.

- Open-source components available during launch, with the compression models themselves kept closed; models are free to use during the launch period.

- Simple setup that the team says takes less than a minute. The compression ratio is currently fixed at 0.5.

Pricing and Value

Context Gateway is free at launch, including use of the compression models during the initial period. The open-source parts of the project are available to inspect and extend, while the model weights remain proprietary. For teams and developers who run frequent or large agent sessions, the product can reduce token-related costs and shorten end-to-end latency. How much it saves depends on the workload, in particular on how much of the session's context is redundant tool output.
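To make the dependence on workload concrete, here is a rough back-of-the-envelope sketch. It assumes the fixed 0.5 ratio means compressed tool output is about half the original token count, and that only tool outputs are compressed; the source does not spell out either point. The function name `estimate_savings` and its parameters are hypothetical and are not part of Context Gateway.

```python
def estimate_savings(total_tokens: int,
                     tool_output_share: float,
                     compression_ratio: float = 0.5) -> dict:
    """Estimate session tokens after compressing only the tool-output portion.

    Assumption: compression_ratio = compressed size / original size,
    applied to tool outputs only. This is an illustrative model, not
    the product's actual accounting.
    """
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    if not 0.0 <= tool_output_share <= 1.0:
        raise ValueError("tool_output_share must be between 0 and 1")
    if not 0.0 < compression_ratio <= 1.0:
        raise ValueError("compression_ratio must be in (0, 1]")

    tool_tokens = total_tokens * tool_output_share
    other_tokens = total_tokens - tool_tokens
    tokens_after = other_tokens + tool_tokens * compression_ratio
    tokens_saved = total_tokens - tokens_after

    return {
        "tokens_before": total_tokens,
        "tokens_after": round(tokens_after),
        "tokens_saved": round(tokens_saved),
        "fraction_saved": tokens_saved / total_tokens,
    }


# Hypothetical session: 200,000 tokens, half of them tool output.
result = estimate_savings(200_000, tool_output_share=0.5)
print(result)
```

Under these assumptions, a session where half the tokens come from tool output would shrink by a quarter overall, while a session with little tool output would see almost no change.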

Pros

- Can materially reduce token spend and improve response speed for tool-heavy agent sessions.

- Fast setup and handy features like instant compaction and spend caps for operational control.

- Useful integrations such as Slack notifications make it practical for team workflows.

- Open-source components make it easier to audit and extend the proxy behavior.

Cons

- Compression currently treats structured and unstructured outputs the same, which risks trimming useful structured fields; a more granular approach is planned but not yet available.

- The compression models are not open-source, which limits full transparency and on-premise control for some users.

- Compression ratio is fixed at 0.5 for now; auto-tuning by content type is planned but not yet implemented.

Context Gateway is well suited to developers and teams that run agent workflows with frequent tool calls and large outputs, and who want to reduce token costs and speed up sessions. It fits especially well as a lightweight proxy during development and early deployments. Users who need full model transparency or structured-data-aware compression may want to wait for upcoming updates before committing to a production-wide rollout.
