About Free LLM API

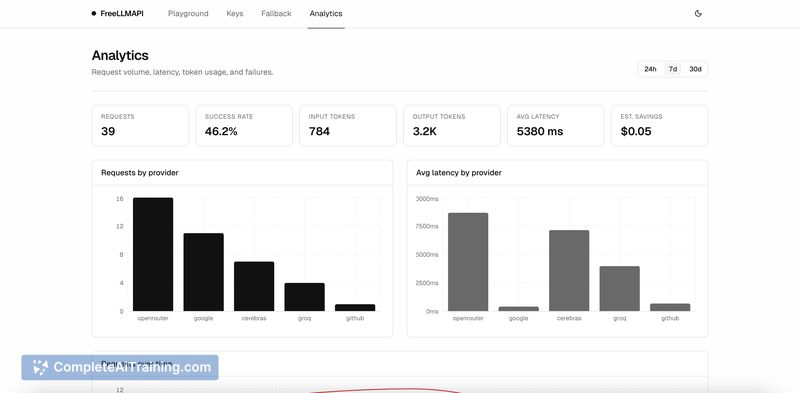

Free LLM API is an open-source, OpenAI-compatible proxy that aggregates free-tier API keys from approximately 14 language model providers. It offers unified routing, rate limiting, and automatic fallback, so you can send requests to a single endpoint instead of juggling a separate endpoint for each provider.
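
Because the proxy is described as OpenAI-compatible, a client should be able to talk to it with an ordinary OpenAI-style chat completion request. The sketch below only builds such a request with the Python standard library and does not send it. The base URL, the model name `auto`, and the placeholder key are assumptions for illustration, not values taken from the project's documentation.

```python
import json
import urllib.request

# Assumption: a self-hosted instance listening locally; the real host, port,
# and path prefix depend on how the proxy is deployed.
PROXY_BASE_URL = "http://localhost:8000/v1"


def build_chat_request(prompt, model="auto", api_key="placeholder-key",
                       base_url=PROXY_BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # hypothetical model name, check the proxy's own docs
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_chat_request("Say hello in one sentence.")
print(request.get_method(), request.full_url)
# To actually send it against a running instance:
#   with urllib.request.urlopen(request) as response:
#       print(json.load(response))
```

Existing OpenAI client libraries that accept a custom base URL could likely be pointed at the same address instead of hand-building requests.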

Review

This tool aims to simplify LLM prototyping by combining many providers' free quotas behind one API. It is presented as a personal experimentation utility rather than a production-grade service, and it works well for quick proofs of concept and learning projects.

Key Features

- Aggregates free-tier keys from multiple LLM providers (around 14), exposing a single API surface.

- OpenAI-compatible proxy interface for easier integration with existing code and tools.

- Automatic failover and routing to switch providers when quotas are hit.

- Built-in rate limiting to help manage combined usage across providers.

- Open source and self-hostable, so you can inspect the code and run your own instance.
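
The failover and rate-limiting features above can be pictured with a small routing loop. This is a minimal sketch of the general technique, not the project's actual implementation: the `Provider` class, the `QuotaExceeded` exception, and the per-provider request budget are all hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List


class QuotaExceeded(Exception):
    """Raised by a (hypothetical) provider call when its free quota is used up."""


@dataclass
class Provider:
    name: str
    send: Callable[[str], str]  # forwards a prompt and returns the reply
    max_requests: int           # simple local request budget (rate limit)
    used: int = 0

    def has_budget(self) -> bool:
        return self.used < self.max_requests


def route(prompt: str, providers: List[Provider]) -> str:
    """Try providers in order, skipping exhausted ones and falling back on quota errors."""
    for provider in providers:
        if not provider.has_budget():
            continue  # local budget spent, move on to the next provider
        provider.used += 1
        try:
            return provider.send(prompt)
        except QuotaExceeded:
            # The upstream quota is gone: mark the provider as exhausted.
            provider.used = provider.max_requests
    raise RuntimeError("all providers exhausted")


# Stub providers standing in for real upstream APIs.
def exhausted(prompt: str) -> str:
    raise QuotaExceeded("free tier used up")


def echo(prompt: str) -> str:
    return f"echo: {prompt}"


providers = [Provider("provider-a", exhausted, 5), Provider("provider-b", echo, 1)]
print(route("hello", providers))  # provider-a fails, provider-b answers
```

A real router would also reset budgets per time window and handle network errors. Both are left out here to keep the fallback logic visible.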

Pricing and Value

The project is free and open source. Its published materials cite a combined allowance of up to 1 billion tokens per month, an aggregate figure that depends on which providers' free tiers are available. The core value is experimentation without entering credit card details or managing billing dashboards. You still have to register for each provider's free plan and supply your own keys, so some account setup is required.

Pros

- Free and open source, so there is no upfront cost to try it out.

- Consolidates multiple free quotas into a single endpoint for faster prototyping.

- No credit card required to take advantage of provider free tiers.

- OpenAI-compatible interface eases adoption with existing clients and code.

- Automatic fallback improves continuity when a provider hits its quota.

Cons

- Intended for personal experimentation; not recommended as a production-grade solution due to reliance on free tiers and variable quotas.

- Requires managing multiple provider accounts and API keys, which adds setup overhead.

- If using a hosted instance, key management and privacy require careful attention; self-hosting reduces that risk but adds operational work.
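
One common way to reduce the key-management overhead noted above on a self-hosted instance is to keep provider keys in environment variables and never log them in full. The sketch below assumes a hypothetical naming scheme (`FREE_LLM_KEY_<PROVIDER>`). The project may well use a different configuration format.

```python
import os
from typing import Dict, Mapping

# Hypothetical naming scheme: FREE_LLM_KEY_<PROVIDER>=<api key>
KEY_PREFIX = "FREE_LLM_KEY_"


def load_provider_keys(environ: Mapping[str, str] = os.environ) -> Dict[str, str]:
    """Collect non-empty provider keys from environment-style variables."""
    keys = {}
    for name, value in environ.items():
        if name.startswith(KEY_PREFIX) and value.strip():
            provider = name[len(KEY_PREFIX):].lower()
            keys[provider] = value.strip()
    return keys


def redact(key: str) -> str:
    """Mask a key for logging, showing at most its last four characters."""
    if len(key) <= 4:
        return "****"
    return "*" * (len(key) - 4) + key[-4:]


# Demo with a fake environment; real keys would come from os.environ.
demo_env = {
    "FREE_LLM_KEY_PROVIDER_A": "sk-example-1234",
    "FREE_LLM_KEY_EMPTY": " ",  # ignored because it is blank
    "PATH": "/usr/bin",         # ignored because it lacks the prefix
}
for provider, key in load_provider_keys(demo_env).items():
    print(provider, redact(key))
```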

Overall, Free LLM API is well suited for hobbyists, students, and developers who want to prototype LLM integrations quickly without billing concerns. For production or commercial work, treat it as a development aid or a reference implementation, and review its security and service guarantees before relying on it long term.
