About Google Gemma 4

Google Gemma 4 is an open model family that delivers advanced reasoning, multimodal input handling, and agentic workflow features. It is optimized to run across a wide range of hardware, from mobile devices to GPUs, while keeping compute requirements comparatively modest.

Review

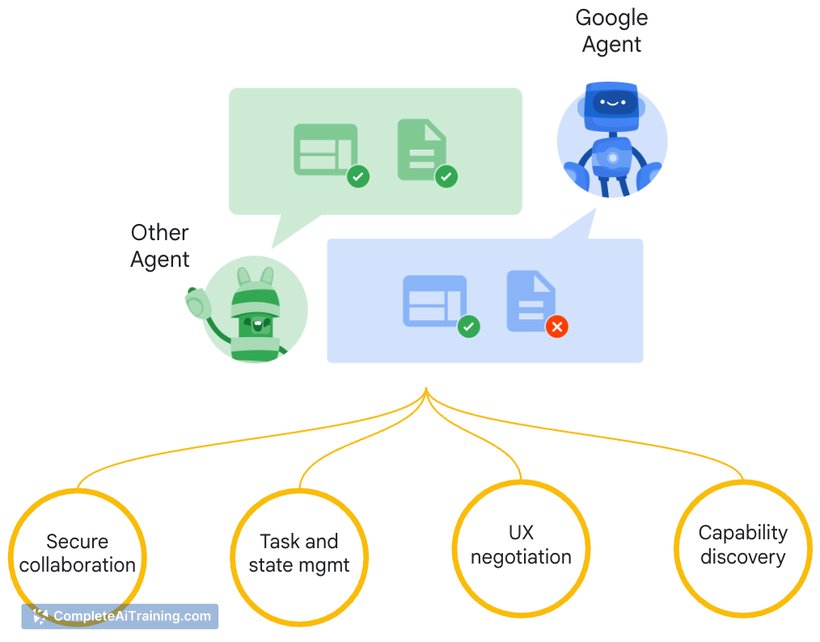

Gemma 4 is positioned as a capable open model for developers and teams that need long-context understanding, structured outputs, and support for images, audio, and video. Its combination of agentic capabilities (native function calling and structured JSON output) and a very large context window makes it practical for building assistants, coding tools, and multilingual applications.

Key Features

- Advanced reasoning: multi-step planning, math, and strong instruction following.

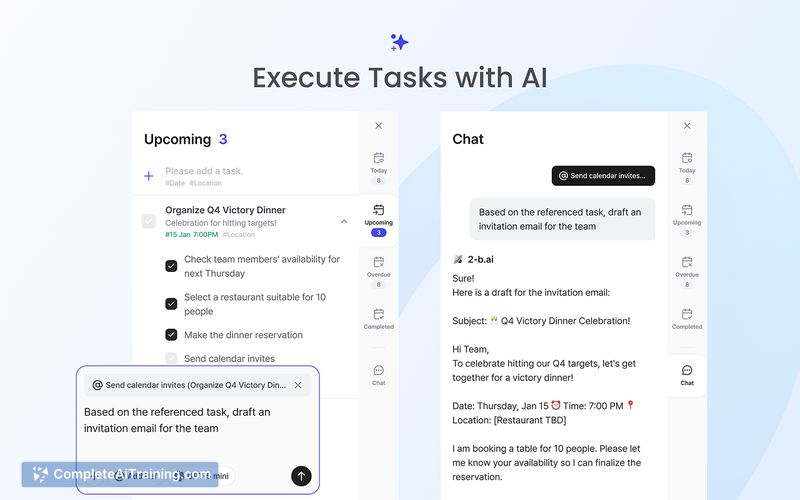

- Agentic workflows: native function calling, system instructions, and structured JSON outputs for tool integration.

- Multimodal inputs: support for images, video, and audio alongside text.

- Very long context window: supports up to 256K tokens for large documents and codebases.

- Open licensing and hardware efficiency: model weights available under Apache 2.0 and optimized to run on phones, laptops, and GPUs.
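
To make the function-calling and structured JSON output features above more concrete, here is a minimal sketch of how an application might consume a tool call emitted by a model. It does not use any Gemma API: the `{"name": ..., "arguments": {...}}` shape, the `get_weather` tool, the `TOOLS` registry, and `dispatch_tool_call` are all hypothetical names chosen for illustration, and the real output format depends on the runtime you use.

```python
import json

# Hypothetical tool: a stand-in for a real lookup, used only for illustration.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

# Hypothetical registry mapping tool names to plain Python callables.
TOOLS = {"get_weather": get_weather}

def dispatch_tool_call(raw_output: str) -> str:
    """Parse a JSON tool call produced by a model and run the matching tool.

    Assumes the (hypothetical) shape {"name": str, "arguments": {...}}.
    Raises ValueError if the output is malformed or names an unknown tool.
    """
    try:
        call = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc

    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")

    # Call the registered tool with the model-supplied keyword arguments.
    return TOOLS[name](**args)

result = dispatch_tool_call('{"name": "get_weather", "arguments": {"city": "Oslo"}}')
print(result)
```

Validating the output before dispatching means a malformed or unexpected tool call fails with a clear error instead of running arbitrary code, which matters more as workflows chain many calls together.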

Pricing and Value

The model family is released under an Apache 2.0-style open license, so model weights are available for download at no licensing cost. Running the model locally requires only the compute and storage resources you provide, which can reduce ongoing cloud costs for many use cases; conversely, hosted deployments will carry provider fees. For teams that need offline capability, fine-tuning, or full control over data flow, Gemma 4 offers strong value by combining high capability with lower compute overhead compared with many larger models.

Pros

- Strong multi-step reasoning and instruction-following with a large context window.

- Integrated agent features that simplify building tool-enabled workflows and structured outputs.

- Multimodal support enables more diverse application interfaces (image, video, audio).

- Open license and downloadable weights give developers control and flexibility for local or private deployments.

- Designed to run efficiently on a range of hardware, making offline and on-device scenarios practical.

Cons

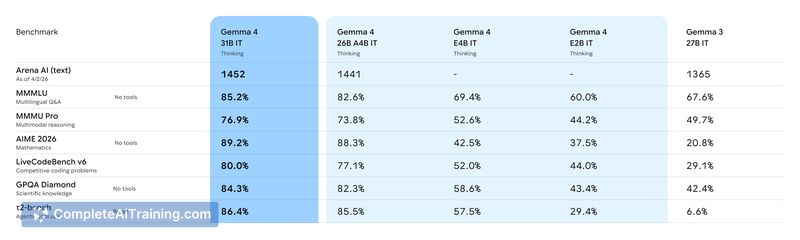

- Performance on very niche or highly specialized tasks may still lag behind the largest closed models; benchmarking is recommended for critical applications.

- Long agentic workflows with many tool calls can expose reliability challenges that need careful engineering and testing.

- Using the full 256K context effectively can require substantial memory and infrastructure for large-scale production workloads.
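
As a rough illustration of why long contexts are memory-hungry, the sketch below estimates the size of a standard attention key/value cache. The model dimensions are hypothetical and are not Gemma 4's published architecture; "256K" is assumed to mean 256 × 1024 tokens; and real deployments may use techniques such as sliding-window attention or cache quantization that lower the figure considerably.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int) -> int:
    """Estimate key/value cache size for one sequence in a plain transformer.

    The leading factor of 2 accounts for storing both keys and values
    at every layer and every token position.
    """
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical dimensions chosen for illustration only.
size = kv_cache_bytes(
    layers=32,
    kv_heads=8,
    head_dim=128,
    seq_len=256 * 1024,   # assumes "256K" means 262,144 tokens
    bytes_per_value=2,    # 16-bit values
)
print(f"{size / 2**30:.1f} GiB")  # prints "32.0 GiB"
```

Under these assumed dimensions the cache alone takes about 32 GiB for a single full-length sequence, before counting the model weights, so it is worth running this kind of estimate against the actual configuration you plan to deploy.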

Overall, Google Gemma 4 is best suited for developers, startups, and organizations building AI agents, code assistants, multimodal apps, or privacy-first local deployments. Teams that need control over model behavior, offline capabilities, and strong long-context handling will find it particularly useful; teams focused solely on squeezing marginal gains from very large closed models should run comparative evaluations before committing.
