About IonRouter

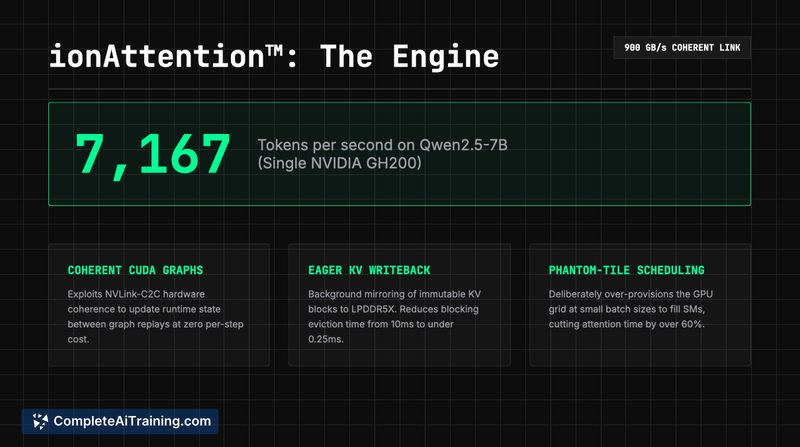

IonRouter is an AI inference and routing platform that provides a drop-in OpenAI-compatible API to access a range of open models for LLM, vision, video, and TTS workloads. It pairs multi-model support and finetune deployment with an internal inference engine (IonAttention) optimized for NVIDIA Grace Hopper to lower costs and reduce latency.

Review

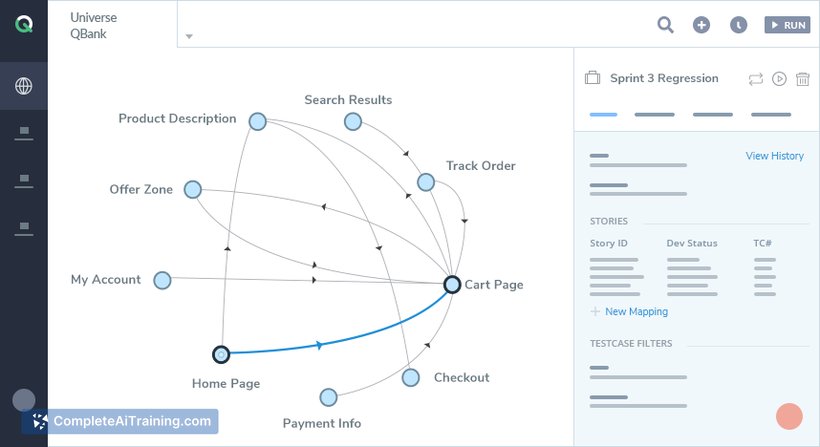

IonRouter targets teams that need to run multiple models and multi-modal applications without managing low-level infrastructure. The service promises lower per-token costs and faster response times by multiplexing models on optimized GPUs while handling scaling and optimization on behalf of users.

Key Features

- OpenAI-compatible API that can act as a drop-in replacement for existing integrations.

- Proprietary IonAttention inference engine optimized for NVIDIA Grace Hopper hardware to reduce latency and cost.

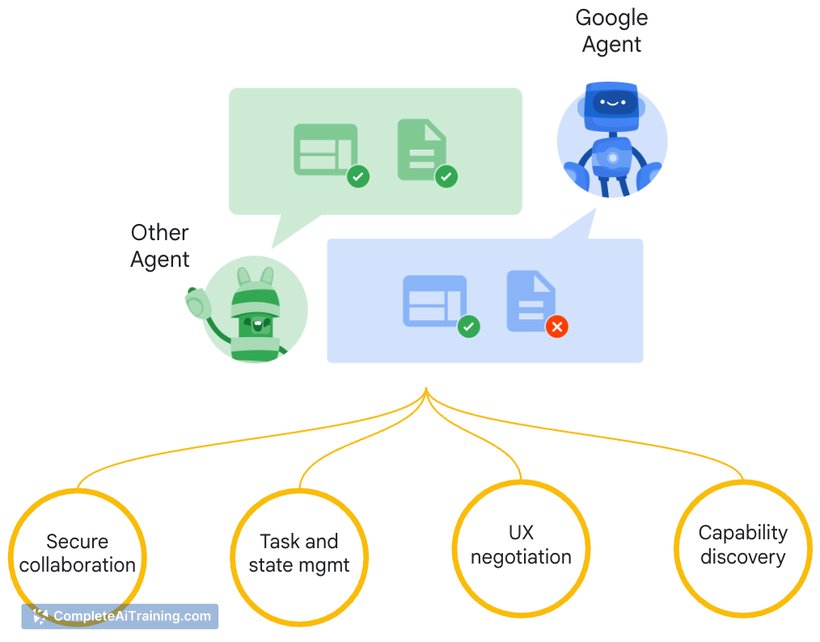

- Support for a broad set of model types: LLMs, vision, video, text-to-speech, agents, and deployed finetunes.

- Managed infrastructure with routing, optimization, and automatic scaling handled by the platform.

- Model multiplexing with claimed sub-100ms switch times between models to improve utilization.
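To make the drop-in claim concrete: with an OpenAI-compatible service, switching providers usually means changing only the base URL and API key while the request shape stays the same. The sketch below builds (but does not send) a chat completion request in the OpenAI format using only the standard library. The base URL, API key, and model name are placeholders assumed for illustration; they are not taken from IonRouter's documentation.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, model, messages):
    """Build (but do not send) an OpenAI-style chat completion request."""
    # OpenAI-compatible services expose the chat endpoint under the base URL.
    url = base_url.rstrip("/") + "/chat/completions"
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# All three values below are hypothetical placeholders, not real IonRouter settings.
BASE_URL = "https://ionrouter.example.invalid/v1"
API_KEY = "PLACEHOLDER_KEY"
MODEL = "placeholder-model"

req = build_chat_request(
    BASE_URL, API_KEY, MODEL, [{"role": "user", "content": "Hello"}]
)
print(req.get_method(), req.full_url)
```

In an existing integration, the equivalent change would typically be limited to the client's base URL and key settings, which is the friction reduction the feature list describes.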

Pricing and Value

IonRouter promotes usage-based API pricing and states that it can serve models at roughly half typical market rates by leveraging its custom inference engine and GPU pool. The referenced material suggests a free option or tier exists, but it does not publish specific limits or a detailed breakdown of pricing tiers. The core value proposition is cost savings for teams running frequent or varied model workloads, particularly when multiple models or finetuned variants must be hosted and routed.
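As a rough illustration of what "roughly half" would mean for a budget, the sketch below compares monthly spend at a baseline per-token price and at half that price. The baseline price and token volume are hypothetical numbers chosen for the arithmetic, not published IonRouter or market rates.

```python
def monthly_cost(tokens_per_month, price_per_million_tokens):
    """Return the monthly cost in USD for a given token volume and price."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


# Hypothetical inputs: replace with your own volume and quoted prices.
baseline_price = 1.00                     # assumed USD per million tokens
discounted_price = baseline_price * 0.5   # the "roughly half" claim, taken literally
volume = 500_000_000                      # assumed tokens per month

baseline = monthly_cost(volume, baseline_price)
discounted = monthly_cost(volume, discounted_price)
savings = baseline - discounted

print(f"baseline: ${baseline:.2f}, discounted: ${discounted:.2f}, savings: ${savings:.2f}")
```

Because pricing is usage-based, the absolute savings scale linearly with volume, which is why the claim matters most to teams with high inference volume.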

Pros

- Lower-cost claims make it attractive for teams with high inference volume or many models.

- Drop-in OpenAI-compatible API reduces integration friction for existing applications.

- Supports multi-modal use cases and hosting of finetuned models, plus managed scaling.

- Custom inference engine and model multiplexing can improve GPU utilization and latency.

Cons

- Newly launched product with limited public production track record; real-world stability and SLA details are still emerging.

- Performance and cost advantages depend on specific hardware optimizations (Grace Hopper), which may affect portability or vendor dependence.

- Detailed pricing tiers, quotas, and enterprise support terms are not fully documented in the available information.

IonRouter is a good fit for developer teams and startups that need an affordable, managed way to run multiple open models, multi-modal apps, or finetuned deployments while minimizing infrastructure overhead. For mission-critical deployments, run a performance and reliability pilot to validate latency, failover behavior, and cost under your expected production workload before full adoption.
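A pilot of this kind can start small. The sketch below times repeated calls to any function and reports nearest-rank p50 and p95 latency, which are usually more informative than an average when checking claims like sub-100ms switching. The `call` argument is a stand-in for whatever request your application would make; nothing here depends on IonRouter's API.

```python
import math
import time


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    # Nearest-rank: the smallest value with at least pct% of samples at or below it.
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def measure_latencies(call, runs):
    """Invoke `call` `runs` times and return each wall-clock duration in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies):
    """Summarize a list of latencies as p50, p95, and max."""
    return {
        "p50": percentile(latencies, 50),
        "p95": percentile(latencies, 95),
        "max": max(latencies),
    }


# Stand-in workload; in a real pilot this would send a production-like request.
summary = summarize(measure_latencies(lambda: time.sleep(0.001), runs=20))
print(summary)
```

Running the same measurement against your current provider and against the candidate, with the same prompts and concurrency, gives a like-for-like comparison before committing.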
