About Linchpin

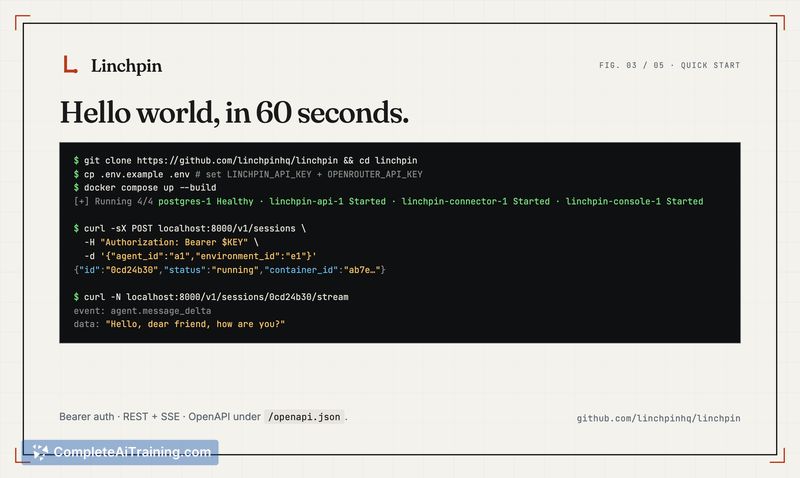

Linchpin is an open-source, self-hostable runtime for managed AI agents, released under the Apache-2.0 license. A single docker compose command brings up an agent platform with REST + SSE APIs, per-session Docker sandboxes, MCP tools, and encrypted credential vaults.

Review

Linchpin aims to fill the gap between libraries and a runnable control plane by providing a pre-wired runtime layer you can deploy on a VM. The project is early (v0.1) but includes a practical set of features for developers who want local control over agent execution and integrations with cloud or local model providers.

Key Features

- Quick deployment: a single `docker compose up` command brings up REST + SSE APIs, session/event persistence, and a small console.

- Per-session Docker sandboxes and environment templates to isolate agent runs.

- Built-in tools (bash, read, write, edit, glob, grep, web_fetch, web_search) and MCP support via stdio or HTTP.

- Fernet-encrypted credential vaults for storing secrets locally.

- Flexible model routing: use cloud providers via OpenRouter or local models via Ollama.
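
To make the SSE side of the API concrete, here is a minimal sketch of how a client might parse a Server-Sent Events stream in Python using only the standard library. It illustrates the generic SSE wire format, not Linchpin's actual API: the event name `tool_call` and the JSON payload are hypothetical, and the review does not document Linchpin's real endpoints or event schema.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class SSEEvent:
    event: str  # event type; "message" when the stream sends no "event:" field
    data: str   # payload, with multiple "data:" lines joined by newlines


def parse_sse(lines: Iterable[str]) -> Iterator[SSEEvent]:
    """Yield events from an iterable of SSE text lines."""
    event_type = "message"
    data_parts = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line terminates an event; dispatch it if it carried data.
            if data_parts:
                yield SSEEvent(event_type, "\n".join(data_parts))
            event_type, data_parts = "message", []
            continue
        if line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        field, _, value = line.partition(":")
        if value.startswith(" "):
            value = value[1:]  # a single leading space after the colon is dropped
        if field == "event":
            event_type = value
        elif field == "data":
            data_parts.append(value)


# Hypothetical stream contents, for illustration only.
sample = [
    "event: tool_call\n",
    'data: {"tool": "grep"}\n',
    "\n",
    ": keep-alive\n",
    "data: line one\n",
    "data: line two\n",
    "\n",
]
events = list(parse_sse(sample))
```

In practice the lines would come from a streaming HTTP response rather than a list; the parsing logic stays the same.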

Pricing and Value

Linchpin is free and open-source under the Apache-2.0 license. For teams that prioritize privacy, control, and the ability to host their own agent runtime, it delivers strong value: a ready-made runtime that reduces the engineering effort needed to go from libraries to a running platform. Because model calls are routed to external providers or local runtimes, ongoing costs depend mainly on the chosen model provider and on your own hosting rather than on Linchpin itself.

Pros

- Open-source Apache-2.0 codebase that can be self-hosted and inspected.

- Fast setup that provides a full agent runtime (APIs, sandboxes, event log) with minimal wiring.

- Includes several useful built-in tools and an encrypted vault for credentials out of the box.

- Low base resource footprint: about 500 MB of memory at idle for the runtime and roughly 300 MB per active sandbox task.

- Supports mixing cloud and local model providers (OpenRouter, Ollama), giving deployment flexibility.
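
The footprint figures above lend themselves to a rough capacity estimate. The sketch below assumes memory grows linearly with the number of active sandboxes, using the approximate numbers quoted in this review (about 500 MB idle, about 300 MB per sandbox). The function names are illustrative and not part of Linchpin, and actual usage will vary with workload, particularly since sandboxes currently have no memory caps.

```python
# Approximate figures quoted in the review; treat them as rough estimates.
IDLE_RUNTIME_MB = 500
PER_SANDBOX_MB = 300


def estimate_memory_mb(active_sandboxes: int) -> int:
    """Estimated memory for the runtime plus N active sandboxes (linear model)."""
    if active_sandboxes < 0:
        raise ValueError("active_sandboxes must be non-negative")
    return IDLE_RUNTIME_MB + PER_SANDBOX_MB * active_sandboxes


def max_sandboxes(available_mb: int) -> int:
    """How many sandboxes fit in the given memory under the same linear model."""
    return max(0, (available_mb - IDLE_RUNTIME_MB) // PER_SANDBOX_MB)


print(estimate_memory_mb(4))  # 500 + 4 * 300 = 1700
print(max_sandboxes(4096))    # (4096 - 500) // 300 = 11
```

Under this model a 4 GB VM would fit roughly eleven concurrent sandboxes before accounting for the operating system and Docker itself, so real headroom is smaller.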

Cons

- Early-release limitations: the runtime is currently single-tenant and single-user, which rules out multi-user or multi-team setups for now.

- Sandbox hardening is incomplete: no CPU/memory/cgroup caps, no exec timeouts, and no disk quotas yet.

- Enterprise features are currently missing: no SSO/RBAC and no built-in observability dashboards.

For teams or developers who want an inspectable, self-hostable agent runtime and can accept early-release tradeoffs, Linchpin is a practical option that shortens the path from prototype to a running control plane. It suits privacy-conscious projects, solo developers, and small teams that prioritize local control and are comfortable adding their own hardening or multi-user controls as they scale.
