About Locally AI + Qwen

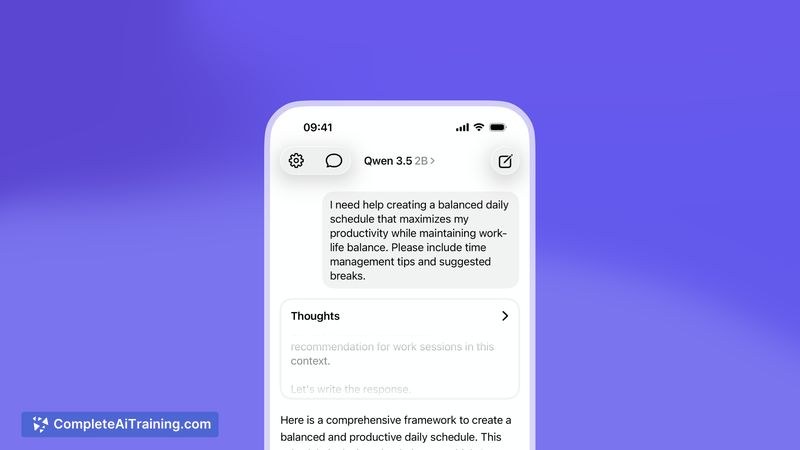

Locally AI + Qwen is a mobile and tablet application that runs advanced Qwen models directly on your device, enabling offline and private AI interactions without a login. It offers vision capabilities and a hybrid reasoning option, with several model size choices to match different hardware and use cases.

Review

This tool focuses on on-device inference, giving users control over data and reducing reliance on cloud services. The interface is native and straightforward, while the availability of multiple model sizes makes it flexible for different performance and storage constraints.

Key Features

- Run models fully offline with local-only processing and no account required.

- Vision-enabled models with a toggle that switches the hybrid reasoning mode on or off.

- Multiple model sizes (0.8B, 2B, 4B, 9B) so users can trade off capability and resource use.

- Native user interface aimed at simple chat and interaction flows.

- Optimizations for modern mobile processors to improve efficiency and responsiveness.

Pricing and Value

The listed launch version is free to use. For users who prioritize privacy and offline access, that gives it clear value over paid cloud-based alternatives. The trade-offs are device storage for model downloads and a battery and performance cost that depends on the chosen model size.
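To get a feel for the storage trade-off, a rough weight-only estimate is parameter count times bits per weight, divided by eight. The sketch below applies that to the four listed sizes. The 4-bit default is an assumption for illustration; the listing does not state which quantization the app uses, and the function name is hypothetical. Real downloads will likely be larger, since they also include tokenizer files and vision components, and memory use at runtime is higher again.

```python
# Back-of-the-envelope estimate of model weight size on disk.
# Assumption: weights dominate the download and are stored at a fixed
# number of bits each. The app's actual quantization is not documented here.

def approx_model_size_gb(params_billions: float, bits_per_weight: int = 4) -> float:
    """Return the approximate weight-only footprint in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Model sizes listed for the app, in billions of parameters.
LISTED_SIZES_B = [0.8, 2, 4, 9]


def size_table(bits_per_weight: int = 4) -> dict:
    """Map each listed model size to its estimated footprint in GB."""
    return {
        size: round(approx_model_size_gb(size, bits_per_weight), 2)
        for size in LISTED_SIZES_B
    }


if __name__ == "__main__":
    for size, gb in size_table().items():
        print(f"{size}B parameters at 4-bit: about {gb} GB")
```

Under these assumptions the largest model needs roughly ten times the space of the smallest, which is why checking free storage before downloading is worthwhile.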

Pros

- True offline operation that keeps data local and avoids cloud logins.

- Flexible model size options help adapt to different devices and needs.

- Vision plus reasoning modes expand the types of tasks it can handle.

- Simple native UI makes it easy to get started without complex setup.

- Good fit for privacy-focused workflows and experimentation with local inference.

Cons

- Larger models require substantial storage and may be limited to higher-end hardware.

- Inference speed and memory use vary by device; some users may need trial and error to find the best model choice.

- On-device limits mean it may not match the largest cloud models for some heavy-duty tasks.

Locally AI + Qwen is best for users who want private, offline AI on their phone or tablet and are willing to manage model downloads and device resources. It suits power users, privacy-conscious individuals, and developers experimenting with local model inference who need a straightforward on-device experience.
