About OpenAI WebSocket Mode for Responses API

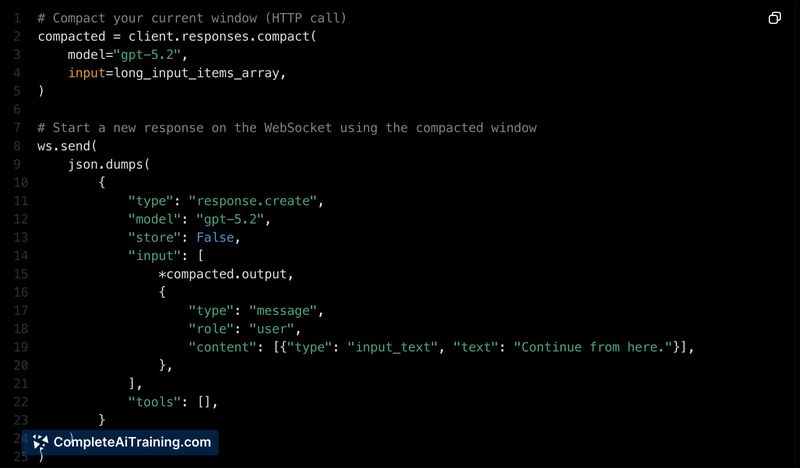

OpenAI WebSocket Mode for Responses API provides a persistent WebSocket connection to the Responses API so agent turns can send only incremental inputs instead of resending full context every time. The approach keeps session state in memory and is aimed at reducing end-to-end latency and bandwidth for workflows that make frequent tool calls.

Review

WebSocket Mode targets use cases where repeated tool calls force the model and infrastructure to reprocess the same context turn after turn. By switching to a single persistent connection and sending only incremental updates, the feature is reported to cut latency by roughly 39% on complex multi-file agentic tasks (up to 50% in some cases) while simplifying session state handling.

Key Features

- Persistent connection to /v1/responses to avoid new HTTP handshakes per turn.

- Incremental input transfer so only new information travels over the wire rather than the full conversation history.

- Session state maintained in memory so the model continues from the previous turn without reprocessing all prior context.

- Demonstrated latency reductions in production testing (roughly 39% on complex multi-file tasks, up to 50% in some cases).

- Plays well with server-side compaction to extend usable agent runtime without hitting context-size limits.
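To make the incremental-input point concrete, here is a minimal offline sketch that compares how many bytes a client would send over 20 turns when it resends the full history each time versus when it sends only the new items. The message shapes and field names (`input`, `previous_id`) are hypothetical placeholders chosen for illustration, not the documented wire format, and no network call is made.

```python
import json


def full_context_payload(history, new_items):
    """Stateless style: every turn resends the whole conversation so far."""
    return json.dumps({"input": history + new_items})


def incremental_payload(new_items, previous_id):
    """Persistent-connection style: send only the new items.

    The field names are illustrative assumptions, not a real API schema.
    """
    return json.dumps({"previous_id": previous_id, "input": new_items})


def bytes_sent(turns, incremental):
    """Total UTF-8 bytes the client would transmit across all turns."""
    history = []
    total = 0
    for index, items in enumerate(turns):
        if incremental:
            previous_id = f"turn-{index - 1}" if index else None
            payload = incremental_payload(items, previous_id)
        else:
            payload = full_context_payload(history, items)
        total += len(payload.encode("utf-8"))
        history.extend(items)
    return total


# Twenty turns, each adding one tool result of roughly 200 characters.
turns = [
    [{"role": "user", "content": f"tool result {i}: " + "x" * 200}]
    for i in range(20)
]
full_total = bytes_sent(turns, incremental=False)
incr_total = bytes_sent(turns, incremental=True)
print(f"full resend: {full_total} bytes, incremental: {incr_total} bytes")
```

In this toy model the full-resend total grows roughly quadratically with the number of turns, while the incremental total grows linearly, which is why the gap widens in long sessions.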

Pricing and Value

The launch page mentions free options, and billing is expected to follow standard Responses API usage metrics. The primary value is operational: lower network and compute overhead for heavy, multi-turn agent workflows, which can translate into cost savings at scale. For short, single-turn requests, the WebSocket handshake adds a small initial overhead, so the cost-benefit depends on workload pattern and session length.
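The trade-off between handshake overhead and per-turn savings can be approximated with simple arithmetic. The sketch below assumes a one-time handshake cost and a fixed fractional latency reduction per turn; the function name and the millisecond figures are illustrative assumptions, and only the 39% reduction comes from the figures quoted in this review.

```python
import math


def break_even_turns(handshake_ms, per_turn_ms, reduction):
    """Smallest number of turns at which the per-turn savings cover the handshake.

    handshake_ms: one-time connection setup cost (assumed value).
    per_turn_ms:  baseline latency of one turn without the persistent connection.
    reduction:    fractional latency reduction per turn, e.g. 0.39 for 39%.
    Returns None when there is no per-turn saving to amortize against.
    """
    saving_per_turn = per_turn_ms * reduction
    if saving_per_turn <= 0:
        return None
    return math.ceil(handshake_ms / saving_per_turn)


# Hypothetical numbers: 150 ms handshake, 800 ms baseline turn, 39% reduction.
print(break_even_turns(150, 800, 0.39))
```

Under these assumptions the handshake pays for itself quickly when turns are slow, and takes many more turns to amortize when each turn is already fast.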

Pros

- Substantial latency reduction for workloads with frequent tool calls and multi-turn interactions.

- Less bandwidth and compute wasted by avoiding repeated context resends.

- Simpler session continuity because state can be kept in memory across turns.

- Enables longer-running agent sessions when combined with server-side compaction.

Cons

- WebSocket handshake introduces slight initial overhead, making it less advantageous for brief, simple requests.

- Requires connection lifecycle management (reconnects, resume behavior) and changes to existing agent infrastructure.

- Behavior and recovery on connection drops must be considered and tested for each integration.
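Connection lifecycle management usually starts with a reconnect policy. The following is a minimal sketch of exponential backoff around a caller-supplied `connect` function. The helper names, defaults, and the use of `ConnectionError` are assumptions for illustration, not part of any OpenAI SDK; a real integration would also need to decide how to restore or rebuild session state after reconnecting.

```python
import random
import time


def backoff_delays(max_attempts, base=0.5, cap=30.0, jitter=0.0, rng=random.random):
    """Yield one delay per attempt: base * 2**attempt, capped, plus optional jitter."""
    for attempt in range(max_attempts):
        delay = min(cap, base * (2 ** attempt))
        yield delay + delay * jitter * rng()


def connect_with_retry(connect, max_attempts=5, sleep=time.sleep):
    """Call connect() until it succeeds or the attempts are exhausted.

    connect is any zero-argument callable that returns a session object or
    raises ConnectionError (a hypothetical stand-in for a WebSocket client).
    """
    last_error = None
    for delay in backoff_delays(max_attempts):
        try:
            return connect()
        except ConnectionError as exc:
            last_error = exc
            sleep(delay)  # wait before the next attempt
    raise ConnectionError("gave up after repeated failures") from last_error
```

Passing `sleep` as a parameter keeps the retry loop testable without real waiting, and setting `jitter` above zero helps avoid many clients reconnecting at the same instant.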

Overall, OpenAI WebSocket Mode for Responses API is best suited to teams running agentic coding tools, browser or desktop automation loops, and orchestration systems where per-turn latency and repeated context transmission are major pain points. For light, single-turn use cases, the benefits are marginal, so evaluate it against your session patterns and operational priorities.
