About Pioneer

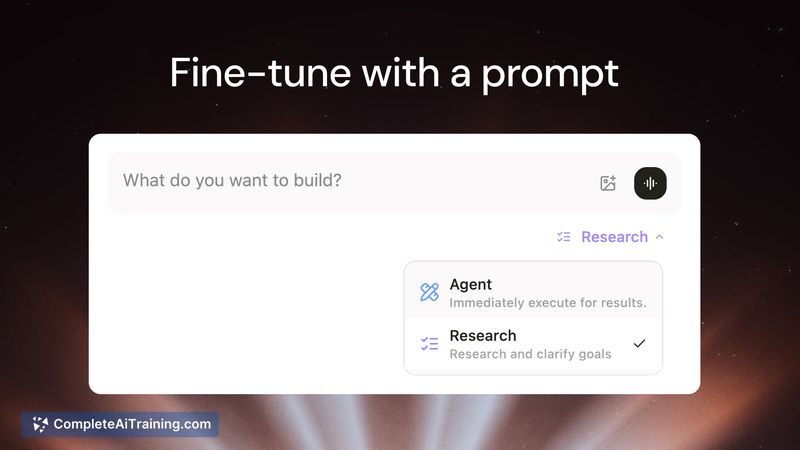

Pioneer is a platform for quickly fine-tuning language models using a single natural-language prompt. It automates data generation, training, evaluation, and deployment so teams can produce specialized models with minimal ML engineering effort.

Review

Pioneer compresses the typical fine-tuning workflow into an automated pipeline that promises results in minutes rather than weeks. The platform focuses on small language models (SLMs) specialized for a single task, supports continuous refinement from live inference data, and offers export for local use on higher-tier plans.

Key Features

- One-prompt fine-tuning: describe the task in plain English and Pioneer generates synthetic training data and runs training, evals, and deployment.

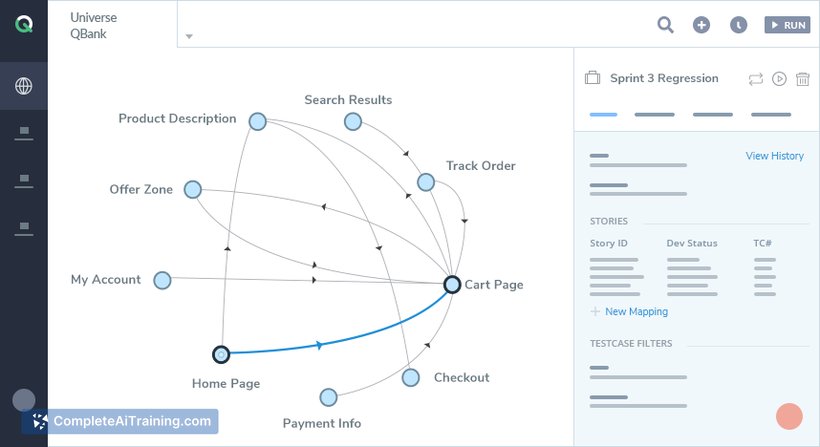

- End-to-end automation: data generation, hyperparameter selection, training on cloud GPUs, evaluation against benchmarks, and deployment are handled by the platform.

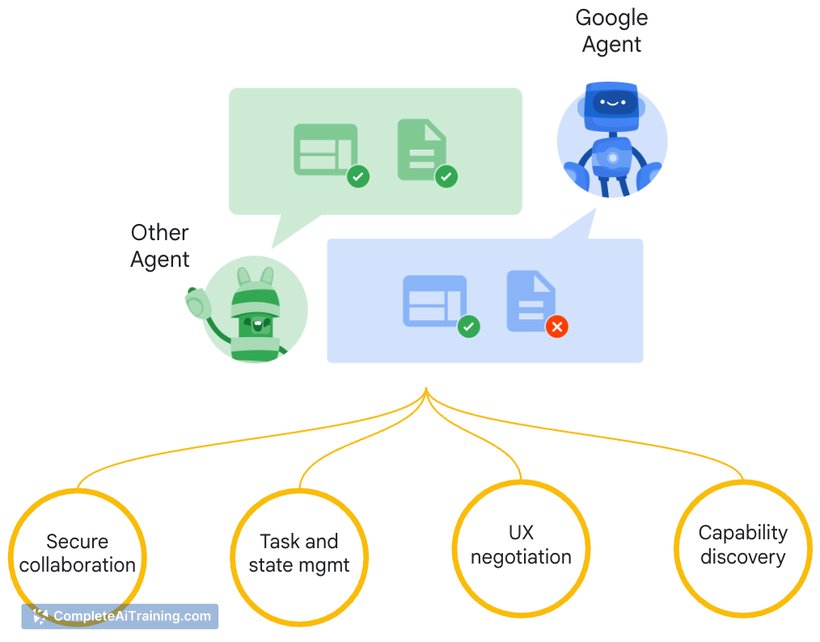

- Continuous improvement: deployed models are monitored and retrained from inference traces with curated data, regression checks, and rollback safeguards.

- Small-model focus: benchmarks claim fine-tuned SLMs can match or exceed larger models on specific tasks with lower latency and cost.

- Export and integrations: API access, plus a Pro subscription that allows downloading model weights for local inference and self-hosting.
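
The regression checks and rollback safeguards mentioned under continuous improvement can be illustrated with a minimal sketch. This is not Pioneer's implementation, which is not described in detail here; `regression_gate`, `Decision`, the toy models, and the held-out examples are all hypothetical names and data chosen for illustration. The idea is simply that a retrained model replaces the deployed one only if it does not score worse on a held-out set.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# Hypothetical types: a "model" is any function from input text to a label.
Example = tuple[str, str]  # (input text, expected label)
Model = Callable[[str], str]


def accuracy(model: Model, examples: Sequence[Example]) -> float:
    """Fraction of held-out examples the model labels correctly."""
    if not examples:
        raise ValueError("held-out set must not be empty")
    correct = sum(1 for text, expected in examples if model(text) == expected)
    return correct / len(examples)


@dataclass
class Decision:
    promote: bool           # True: deploy the candidate; False: keep the current model
    current_score: float
    candidate_score: float


def regression_gate(current: Model, candidate: Model,
                    held_out: Sequence[Example],
                    tolerance: float = 0.0) -> Decision:
    """Promote the candidate only if it is not worse than the current model
    by more than `tolerance` on the held-out set (an assumed policy)."""
    current_score = accuracy(current, held_out)
    candidate_score = accuracy(candidate, held_out)
    promote = candidate_score >= current_score - tolerance
    return Decision(promote, current_score, candidate_score)


# Toy data and models, purely illustrative.
held_out = [
    ("refund please", "billing"),
    ("app crashes", "bug"),
    ("love it", "praise"),
    ("charge twice", "billing"),
]


def current_model(text: str) -> str:
    return "billing" if ("refund" in text or "charge" in text) else "bug"


def candidate_model(text: str) -> str:
    return "bug"  # a degraded retrain that collapsed to one label


decision = regression_gate(current_model, candidate_model, held_out)
print(decision)
```

Here the candidate scores lower than the deployed model on the held-out set, so `decision.promote` is false and the current model stays in place, which is the rollback behavior in its simplest form.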

Pricing and Value

Pioneer offers free options and a promotional three-month free inference period. Plans are tiered, and the Pro level unlocks model weight downloads for local deployment. The value proposition has two parts: reducing the time and engineering overhead needed to produce production-ready specialized models, and lowering inference cost and latency by using smaller models where appropriate.

Pros

- Significantly lowers technical barriers: prompt-driven workflow makes fine-tuning accessible to non-expert users.

- Fast turnaround: the full pipeline is claimed to complete fine-tuning and deployment in minutes for many tasks.

- Automated quality controls: curated live data ingestion, hard negatives, held-out confirmation sets, multiple-run comparisons, and rollback reduce the risk of regressions.

- Cost and latency benefits: task-specific models can be cheaper and faster to run than large general-purpose models.

- Pro plan export enables local inference for teams with on-prem or privacy requirements.

Cons

- Core fine-tuning is platform-hosted by default; self-hosting the resulting model requires the Pro export path and additional setup.

- Synthetic-data generation can introduce blind spots if not sufficiently diverse; automated safeguards help, but careful validation is still necessary.

- Teams with strict compliance or data residency needs will need to verify whether the hosted pipeline and data handling meet their policies.
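
As one example of the validation the synthetic-data concern calls for, a team could run a quick diversity check on generated training examples before trusting them. The sketch below is an assumption about how such a check might look, not a Pioneer feature: `duplicate_rate` and `distinct_n` are hypothetical helper names, and the sample texts are invented. It computes the share of exact duplicates (after whitespace and case normalization) and the distinct-n ratio, a common rough proxy for lexical variety.

```python
from collections import Counter
from typing import Sequence


def duplicate_rate(texts: Sequence[str]) -> float:
    """Share of examples that repeat an earlier one after normalization."""
    normalized = [" ".join(t.lower().split()) for t in texts]
    if not normalized:
        return 0.0
    counts = Counter(normalized)
    duplicates = sum(c - 1 for c in counts.values())
    return duplicates / len(normalized)


def distinct_n(texts: Sequence[str], n: int = 2) -> float:
    """Unique n-grams divided by total n-grams across all examples.
    Values near 0 suggest repetitive data; values near 1 suggest variety."""
    total = 0
    unique = set()
    for text in texts:
        tokens = text.lower().split()
        grams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
        total += len(grams)
        unique.update(grams)
    return len(unique) / total if total else 0.0


# Invented sample of synthetic prompts, for illustration only.
samples = [
    "reset my password",
    "reset my password",
    "Reset my  password",
    "cancel my order",
]

print(f"duplicate rate: {duplicate_rate(samples):.2f}")
print(f"distinct-2:     {distinct_n(samples, n=2):.2f}")
```

Metrics like these only flag surface-level repetition. They do not show whether the data covers the edge cases a production model will see, so manual review of a sample remains worthwhile.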

Overall, Pioneer is best suited for product teams, developers, and small ML groups that want to deploy specialized models quickly without building a full fine-tuning stack. It is particularly useful for projects that benefit from lower-cost, low-latency models and for teams that value iterative improvement with automated safeguards.
