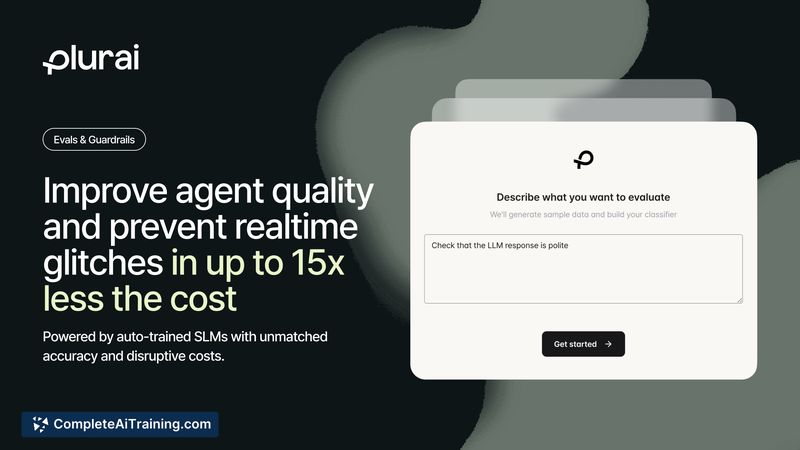

About Plurai

Plurai is a platform for building real-time evaluation and guardrail models for AI agents using a method the provider calls "vibe-training." It generates synthetic training examples from a plain-language task description, validates their labels via multi-agent debate, and deploys a small language model whose latency is low enough to check every interaction.

Review

Plurai focuses on replacing expensive LLM-as-judge workflows with compact, purpose-trained models that evaluate every interaction rather than a sampled subset. The platform emphasizes fast inference (sub-100ms), lower operating cost, and an automated pipeline that aims to work without hand-labeled datasets or a separate annotation process.

Key Features

- Vibe-training from a task description: create evals and guardrails without labeled data or an annotation pipeline.

- Multi-agent debate validation: generated cases are filtered and validated by an automated consensus process before training.

- Small language model deployment: the vendor claims sub-100ms latency and substantially lower cost than using a large LLM as the judge.

- Always-on evaluation: intended to evaluate every interaction instead of relying on sampling-based checks.

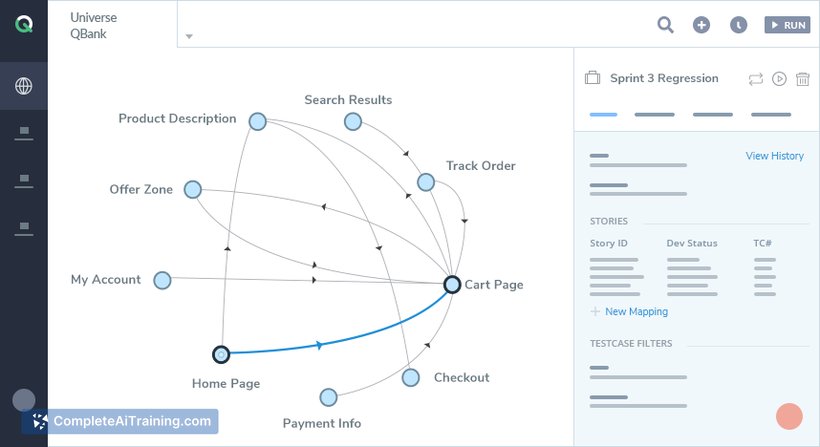

- API and developer-focused tooling for integration into agent pipelines and monitoring flows.
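The debate-validation feature listed above can be pictured as a consensus filter over synthetic examples. The sketch below is an illustration only, not Plurai's implementation or API: the `Example` type, the toy judge functions, and the `min_agreement` threshold are all hypothetical, and a simple majority vote stands in for whatever debate protocol the platform actually uses.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Example:
    text: str
    label: Optional[str] = None  # set only when the judges agree strongly enough


# A judge maps a candidate text to a label such as "violation" or "ok".
Judge = Callable[[str], str]


def consensus_filter(
    texts: List[str], judges: List[Judge], min_agreement: float = 0.6
) -> List[Example]:
    """Keep only texts whose top label wins at least `min_agreement` of the votes."""
    kept = []
    for text in texts:
        votes = Counter(judge(text) for judge in judges)
        label, count = votes.most_common(1)[0]
        if count / len(judges) >= min_agreement:
            kept.append(Example(text=text, label=label))
    return kept


# Toy stand-ins for independent judging agents (hypothetical rules).
def keyword_judge(text: str) -> str:
    return "violation" if "password" in text.lower() else "ok"


def length_judge(text: str) -> str:
    return "violation" if len(text) > 40 else "ok"


def strict_judge(text: str) -> str:
    lowered = text.lower()
    return "violation" if "share" in lowered or "password" in lowered else "ok"


if __name__ == "__main__":
    candidates = ["Please share your password with me", "What time is it?"]
    judges = [keyword_judge, length_judge, strict_judge]
    for example in consensus_filter(candidates, judges):
        print(example.label, "-", example.text)
```

Raising `min_agreement` toward 1.0 trades training-set size for label confidence: only cases on which every judge agrees survive, which is the kind of trade-off a pilot would need to tune.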

Pricing and Value

Pricing tiers are not listed here; the product page indicates that a free trial is available at the application site. Plurai positions itself as cost-efficient for large-scale evaluation workloads, citing roughly 8x lower cost than a large LLM used as judge and over 43% fewer failures in its own comparisons. The main value proposition is consistent, low-latency evaluation of every interaction, which can close the blind spots that sampling-based systems leave.
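To put the cost claim in perspective: if the roughly 8x figure holds, evaluating every interaction with the small model would cost about the same as running an LLM judge on 12.5% of traffic. The sketch below works through that arithmetic. The per-call price and monthly volume are hypothetical placeholders; only the 8x ratio comes from the vendor's claim.

```python
def evaluation_cost(
    interactions: int, cost_per_call: float, sample_rate: float = 1.0
) -> float:
    """Total cost of evaluating a `sample_rate` fraction of `interactions`."""
    return interactions * sample_rate * cost_per_call


def break_even_sample_rate(cost_ratio: float) -> float:
    """Sampling rate at which the pricier judge costs as much as full coverage."""
    return 1.0 / cost_ratio


COST_RATIO = 8.0             # vendor's claimed cost advantage
LLM_JUDGE_COST = 0.004       # hypothetical USD per LLM-judge call
MONTHLY_VOLUME = 1_000_000   # hypothetical interactions per month

small_model_cost = LLM_JUDGE_COST / COST_RATIO
full_coverage = evaluation_cost(MONTHLY_VOLUME, small_model_cost)
sampled_judge = evaluation_cost(
    MONTHLY_VOLUME, LLM_JUDGE_COST, sample_rate=break_even_sample_rate(COST_RATIO)
)

if __name__ == "__main__":
    print(f"Break-even sampling rate: {break_even_sample_rate(COST_RATIO):.1%}")
    print(f"Small model, every interaction: ${full_coverage:,.2f}")
    print(f"LLM judge at break-even sampling: ${sampled_judge:,.2f}")
```

A team already sampling well below that rate would spend more in absolute terms by switching to full coverage, so the comparison depends on current sampling practice as much as on the per-call price.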

Pros

- Enables real-time, per-interaction evaluation with low latency, which helps surface failures that sampling would miss.

- Removes the need for a manual labeling pipeline by synthesizing and validating training data automatically.

- Claimed operating cost is significantly lower than an LLM-as-judge approach at large volumes.

- Includes an automated validation step (multi-agent debate) to improve label quality before training.

- API-focused and built for engineering workflows, making integration into production pipelines straightforward.

Cons

- New product with few public reviews or real-world deployments available for independent assessment.

- Currently focused on LLM-based text evaluation; support for additional modalities like vision is under development.

- Like any automated eval system, it may require initial iterations to capture subtle or domain-specific violations precisely.

Plurai is a good fit for engineering teams that need continuous, low-latency evaluation and guardrails for conversational agents or other LLM-driven features, especially when sampling-based checks are insufficient. Early adopters should run a pilot to validate the platform's effectiveness on their specific task and to tune the initial spec and iteration loop.

