About Query Memory

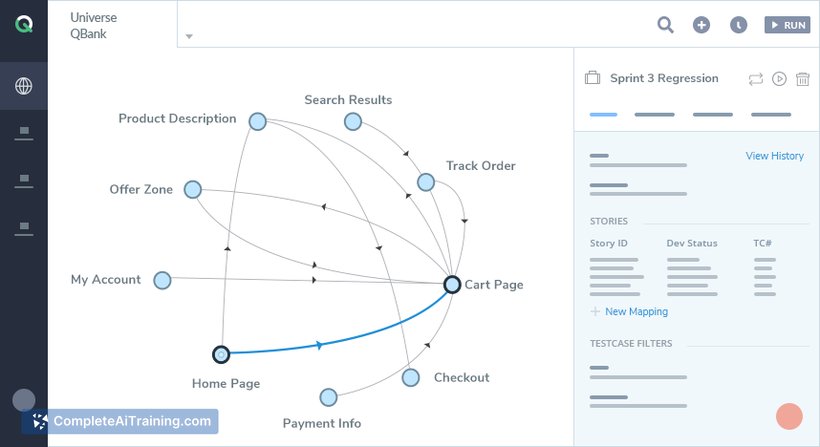

Query Memory is a platform that turns documents, websites, and files into a queryable knowledge base for AI agents. It exposes a single API and a built-in chat interface, and it handles parsing, chunking, embeddings, and retrieval so teams can focus on agent behavior instead of retrieval-augmented generation (RAG) infrastructure.

Review

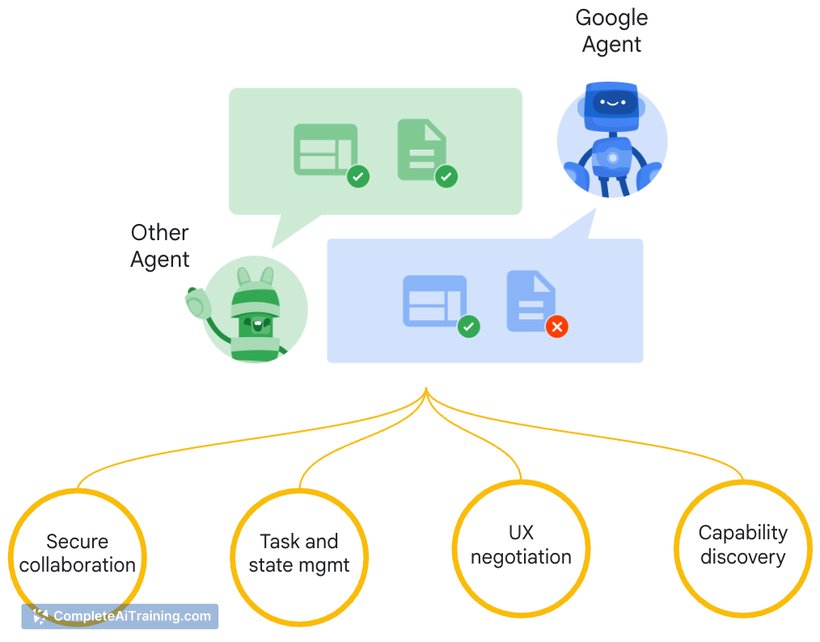

Query Memory targets developers and teams building AI agents that need reliable access to external knowledge. By abstracting away the usual steps of building a retrieval pipeline, it aims to shorten development time and simplify adding document-backed knowledge to agents.

Key Features

- Single unified API for uploading sources and querying assembled knowledge.

- Automatic parsing, chunking, embedding generation, and retrieval management.

- Support for uploading files and connecting web sources to build knowledge bases quickly.

- Built-in chat interface and the ability to attach knowledge directly to AI agents.

- Synchronization options: automatic sync for live integrations and manual uploads for standalone files.
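For readers unfamiliar with what "parsing, chunking, embedding generation, and retrieval" involves, the steps can be sketched in a few lines of Python. This is a toy illustration only, not Query Memory's API or implementation: the function names (`chunk_text`, `embed`, `build_index`, `retrieve`) are hypothetical, and a bag-of-words count vector stands in for a real embedding model.

```python
import math
import re
from collections import Counter


def chunk_text(text, max_words=12):
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy stand-in for an embedding: lowercase term counts (assumption, not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents, max_words=12):
    """Chunk every document and store each chunk next to its vector."""
    return [
        (chunk, embed(chunk))
        for doc in documents
        for chunk in chunk_text(doc, max_words)
    ]


def retrieve(index, query, top_k=1):
    """Return the top_k chunks most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:top_k]]


docs = [
    "Refunds are issued within 14 days of purchase when the item is unused.",
    "Support is available by email on weekdays between nine and five.",
]
index = build_index(docs)
print(retrieve(index, "How long do refunds take?"))
```

A production pipeline would replace each piece with something heavier (file parsers, a learned embedding model, a vector store), which is the work a hosted service of this kind takes on.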

Pricing and Value

A free tier is available at launch for initial experimentation. The product appears to follow the API-usage and storage pricing model common to similar services, with paid plans expected for higher throughput, larger storage, or enterprise needs. The main value is the time saved by offloading parsing, embedding, and retrieval work, which can reduce the engineering effort of adding document access to agents.

Pros

- Removes much of the plumbing required to build RAG-style agent memory, speeding prototyping.

- Simple API and chat interface make it straightforward to attach knowledge to agents.

- Handles end-to-end document processing (parsing → chunking → embeddings → retrieval).

- Supports multiple input types (documents and web sources) so teams can consolidate knowledge in one place.

- Automatic synchronization for live data sources reduces manual upkeep for connected systems.

Cons

- Details on pricing at scale and enterprise SLAs are limited at launch, which may complicate budgeting for large deployments.

- Less visibility into or control over low-level chunking and embedding choices compared with building a custom pipeline.

- As an early-stage offering, advanced enterprise features (audit logs, fine-grained access controls, compliance certifications) may be limited or in development.
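To make the point about chunking control concrete, here is a minimal sketch of the kind of knobs a custom pipeline exposes. The function `chunk_words` and its `size` and `overlap` parameters are hypothetical and chosen for illustration; nothing here reflects how Query Memory chunks documents internally.

```python
def chunk_words(text, size, overlap=0):
    """Split text into windows of `size` words; each window repeats the
    last `overlap` words of the previous one."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        # Stop once a window has reached the end of the text.
        if start + size >= len(words):
            break
    return chunks


text = "one two three four five six seven eight nine ten"
print(chunk_words(text, size=4))
print(chunk_words(text, size=4, overlap=2))
```

Larger chunks keep more context together but make matches less precise, and overlap reduces the chance that a relevant sentence is split across a boundary. A managed service picks these values for you, which is convenient until a particular corpus needs different settings.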

Query Memory is well suited for developers and small teams who need to add document-backed knowledge to AI agents quickly, or for prototyping retrieval-augmented workflows without investing in custom infrastructure. Larger organizations with strict control or compliance needs should evaluate the available controls and pricing before committing to production use.
