About QuickCompare by Trismik

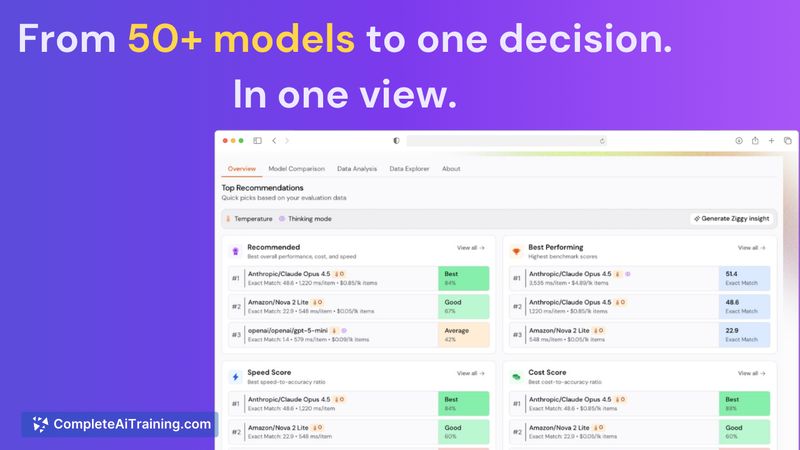

QuickCompare by Trismik is a cloud-based tool for evaluating large language models on your own data. It runs multiple models in parallel and reports side-by-side metrics for output quality, inference cost, and latency, so teams can pick the model that best fits their specific tasks.

Review

QuickCompare aims to replace ad-hoc model testing with a repeatable workflow that scores models against real task data. The platform includes guidance for setting up evaluations and returns detailed comparisons, so teams can see the trade-offs between accuracy, cost, and speed.

Key Features

- Upload your dataset and run comparisons across 50+ models in a single workflow.

- Side-by-side reporting of output quality, cost per call, average latency, and tail latency.

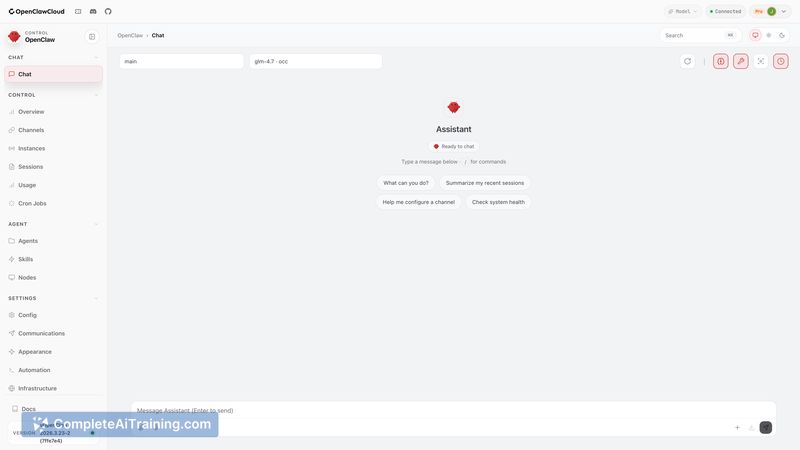

- Built-in assistant that suggests evaluation metrics, input templates, and judge setups for open-ended tasks.

- Per-slice breakdowns to show how models perform on easy versus hard subsets of your data.

- Parallel execution to speed up large-scale comparisons without building custom scripts.
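The review does not describe how QuickCompare computes its metrics, so the following is only a minimal sketch of how a side-by-side report of this kind could be derived from raw per-call records. The `Result` record, `summarize`, and `percentile` are hypothetical names, correctness is assumed to be a boolean verdict from a metric or judge, and "tail latency" is assumed here to mean the 95th percentile, computed with the nearest-rank method.

```python
from collections import defaultdict
from dataclasses import dataclass
import math
import statistics


@dataclass
class Result:
    """One model response to one dataset item (hypothetical record format)."""
    model: str
    slice_name: str   # e.g. "easy" or "hard" subset of the dataset
    correct: bool     # verdict from a metric or an LLM judge
    cost_usd: float   # cost of this single call
    latency_s: float  # wall-clock time for this call, in seconds


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def summarize(results):
    """Group results by model and report quality, cost, and latency side by side."""
    by_model = defaultdict(list)
    for r in results:
        by_model[r.model].append(r)

    summary = {}
    for model, rows in by_model.items():
        latencies = [r.latency_s for r in rows]
        # Per-slice breakdown: collect correctness verdicts for each subset.
        slices = defaultdict(list)
        for r in rows:
            slices[r.slice_name].append(r.correct)
        summary[model] = {
            "accuracy": sum(r.correct for r in rows) / len(rows),
            "cost_per_call": statistics.mean(r.cost_usd for r in rows),
            "avg_latency": statistics.mean(latencies),
            "p95_latency": percentile(latencies, 95),  # assumed tail-latency definition
            "per_slice_accuracy": {s: sum(v) / len(v) for s, v in slices.items()},
        }
    return summary
```

Reporting a tail percentile next to the mean is the design choice worth noting: a handful of slow calls barely moves the average but dominates p95, which is often what end users actually notice.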

Pricing and Value

At launch, QuickCompare offers a free tier with promotional credits to try the service. Beyond the trial, usage is billed based on evaluation runs and the models you select; full pricing details are available on the product website. The core value proposition is reducing guesswork and inference spend by showing which models are cost-effective for your actual prompts and tasks, rather than relying on generic benchmarks.
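One way to turn a comparison into a cost decision is to pick the cheapest model that clears a quality bar on your own data. The sketch below assumes a hypothetical per-model summary dictionary with `accuracy` and `cost_per_call` fields and a hypothetical `cheapest_adequate` helper; it is an illustration of the selection rule, not a QuickCompare feature or API.

```python
def cheapest_adequate(summaries, min_accuracy):
    """Return the lowest-cost model whose accuracy meets the threshold, or None.

    `summaries` maps a model name to a dict with hypothetical keys
    "accuracy" (0.0-1.0) and "cost_per_call" (USD).
    """
    eligible = [
        (stats["cost_per_call"], name)
        for name, stats in summaries.items()
        if stats["accuracy"] >= min_accuracy
    ]
    # min() compares cost first; ties fall back to the model name.
    return min(eligible)[1] if eligible else None
```

Raising the threshold trades spend for quality: with a stricter bar, cheaper models drop out of the eligible set and the choice shifts toward more expensive ones, or to `None` if no model qualifies.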

Pros

- Evaluates models on your real data, giving more relevant signals than public benchmarks.

- Combines quality, cost, and latency in one view so trade-offs are visible and actionable.

- Guided setup lowers the barrier for teams without deep evaluation expertise.

- Supports many models and runs them in parallel to save engineering time.

Cons

- May be more than needed for single-developer projects that stick with one model.

- Free credits are limited at launch, so larger or repeated evaluations will incur cost.

- Getting the best results requires a representative dataset and some choices about metrics and judge prompts.

QuickCompare is best suited to product and engineering teams, data scientists, and evaluation-focused engineers who need evidence-driven model selection before deployment or migration. Smaller projects or solo builders who rarely switch models may find it less essential, but any team paying significant inference costs should find the comparative insights valuable.
