About Reka Edge

Reka Edge is a 7B-parameter vision-language model built for physical AI applications. It pairs a ConvNeXt V2 visual encoder with efficient image tokenization that uses roughly 3x fewer input tokens per image. It targets low-latency video analysis, object detection, and agentic tool use, and is available as open source with free deployment options.

Review

Reka Edge strikes a clear balance between model capability and deployability, focusing on scenarios where latency and on-device operation matter. Its implementation choices (a convolutional encoder, token-efficient image input, and flexible deployment options) make it a practical pick for teams building vision-driven systems outside large cloud-only setups.

Key Features

- 7B vision-language model with a ConvNeXt V2 visual encoder for efficient image representation.

- Uses roughly 3x fewer input tokens for images, reducing compute and memory requirements.

- Sub-second latency for video analysis and real-time object detection and grounding.

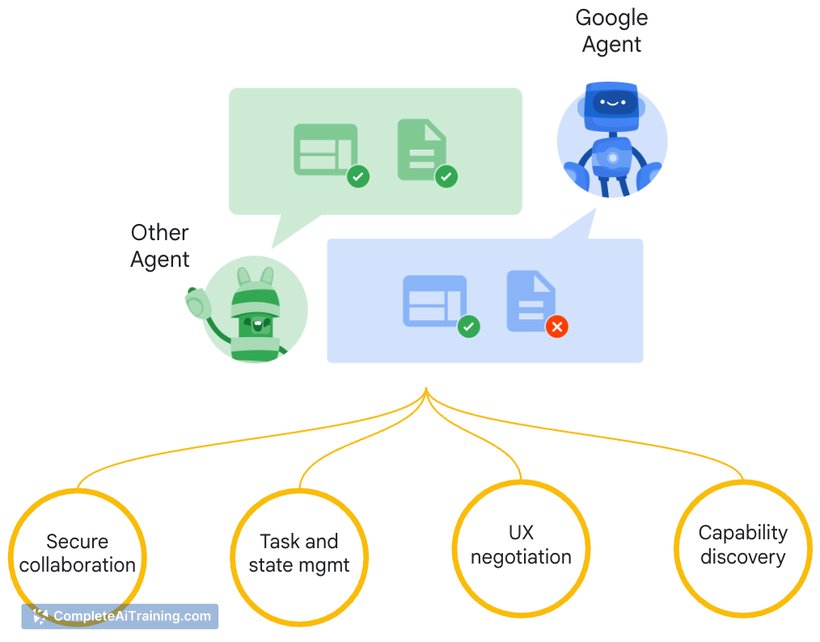

- Agentic tool use and video understanding capabilities aimed at physical AI tasks.

- Multiple deployment paths: playground, API, self-hosting (e.g., Hugging Face with vLLM), and routing via OpenRouter; open-source availability.
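As a rough illustration of the API, self-hosted, and routed paths: vLLM's server and OpenRouter both accept OpenAI-style chat-completions requests, so a single image-plus-prompt payload can in principle be reused across them. The sketch below only builds that payload and sends nothing. The model identifier is a placeholder, and the helper name `build_chat_request` and the `max_tokens` value are hypothetical choices for this example, not taken from Reka's documentation.

```python
import base64
import json

# Placeholder (assumption): replace with the identifier your provider
# or local server actually lists for Reka Edge.
MODEL_ID = "REPLACE_WITH_MODEL_ID"


def build_chat_request(image_bytes: bytes, prompt: str,
                       model: str = MODEL_ID,
                       mime: str = "image/jpeg") -> dict:
    """Build an OpenAI-style chat-completions payload with one image."""
    # Images are embedded inline as a base64 data URL.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {
                        "type": "image_url",
                        "image_url": {"url": f"data:{mime};base64,{encoded}"},
                    },
                ],
            }
        ],
        "max_tokens": 128,  # illustrative cap, not a recommended setting
    }


if __name__ == "__main__":
    # Dummy bytes stand in for a real JPEG frame.
    payload = build_chat_request(b"\xff\xd8\xff", "List the objects in this frame.")
    print(json.dumps(payload, indent=2))
```

The resulting dictionary would be sent as the JSON body of a POST to the chat-completions endpoint of whichever path you choose; the base URL, authentication, and exact model name depend on that path and should be checked against its documentation.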

Pricing and Value

Reka Edge is listed with free options and an open-source distribution, which lowers the barrier to experimentation and self-hosted deployment. For teams that can self-manage infrastructure, self-hosting can significantly reduce recurring cloud costs compared with large commercial endpoints. Organizations that prefer managed services can use API or OpenRouter access, trading lower operational overhead for usage fees. Overall value is strongest for projects that prioritize low latency, local deployment, and cost control over maximizing absolute model size.

Pros

- Efficient image tokenization and a convolutional encoder reduce inference cost and latency.

- Designed for real-time video and object grounding tasks common in physical AI systems.

- Open-source and multiple hosting options provide flexibility for research and production use.

- Sub-second performance makes it suitable for robots, drones, wearables, and similar devices.
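To make the token-efficiency point concrete, here is a small back-of-the-envelope sketch of how a roughly 3x reduction in image tokens changes the input load for a video stream. The baseline of 576 tokens per frame and the 2 frames-per-second sampling rate are assumed, illustrative numbers, not published figures for Reka Edge or any specific model.

```python
# Assumed, illustrative values (not measured figures for any model).
BASELINE_TOKENS_PER_FRAME = 576
REDUCTION_FACTOR = 3        # "roughly 3x fewer input tokens"
FRAMES_PER_SECOND = 2       # hypothetical sampling rate for video analysis


def reduced_tokens(baseline: int, factor: int) -> int:
    """Tokens per frame after dividing the baseline by the reduction factor."""
    return baseline // factor


def tokens_per_second(tokens_per_frame: int, fps: int) -> int:
    """Image tokens the model must ingest for each second of video."""
    return tokens_per_frame * fps


if __name__ == "__main__":
    efficient = reduced_tokens(BASELINE_TOKENS_PER_FRAME, REDUCTION_FACTOR)
    print("baseline tokens/s: ",
          tokens_per_second(BASELINE_TOKENS_PER_FRAME, FRAMES_PER_SECOND))
    print("efficient tokens/s:",
          tokens_per_second(efficient, FRAMES_PER_SECOND))
```

Under these assumptions the per-second input drops from 1152 to 384 image tokens, which is the kind of saving that leaves more room for latency and memory budgets on constrained devices.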

Cons

- At 7B parameters, it may be less capable than much larger models for highly complex reasoning or subtle multimodal tasks.

- Edge deployment still requires appropriate hardware and engineering effort to meet power and latency targets.

- Community and ecosystem maturity may lag behind longer-established models and toolchains.

Reka Edge is best suited for teams building vision-driven, latency-sensitive systems such as robotics, drones, automotive sensing, and wearables, or for researchers who want a self-hostable VLM for video and object tasks. For projects that need maximum model capacity for complex multimodal reasoning, a larger hosted model may still be preferable, but Reka Edge offers a practical trade-off for on-device and cost-conscious deployments.
