About Step 3.5 Flash

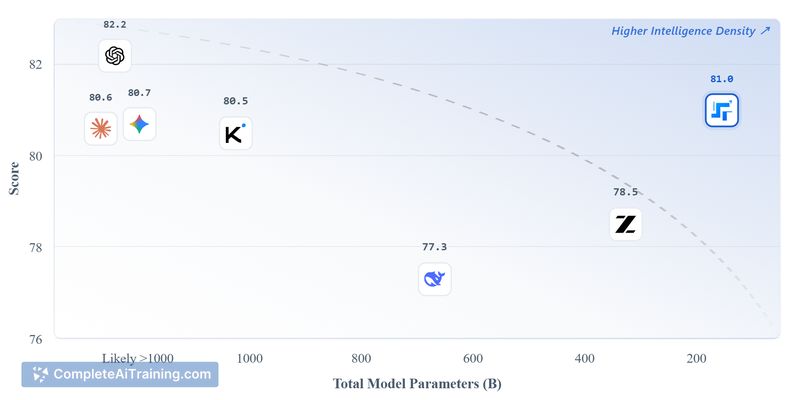

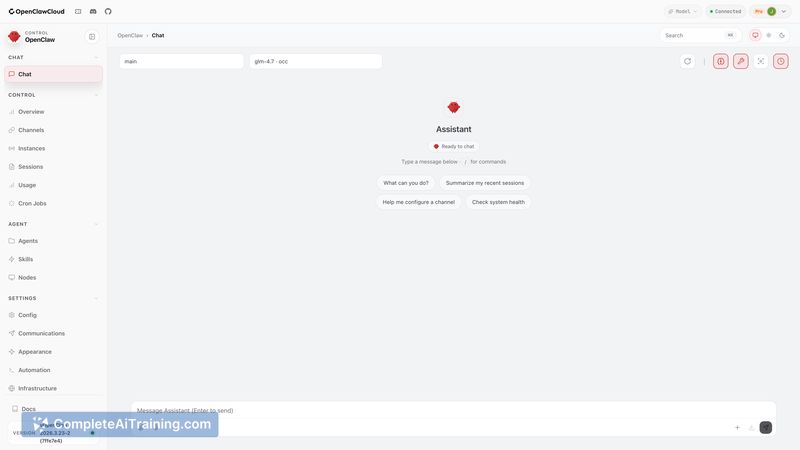

Step 3.5 Flash is a 196B sparse Mixture-of-Experts (MoE) model that activates roughly 11B parameters per token. It is released as an open-source option with native integration for OpenClaw agents, aimed at efficient agentic and coding workflows.

Review

This review summarizes the model's capabilities, performance signals, and practical trade-offs based on launch notes and early usage reports. Step 3.5 Flash stands out for activating only a small fraction of its parameters per token at inference time and for its direct support for agent loops, but deploying and serving an MoE model carries its own operational considerations.

Key Features

- 196B sparse MoE architecture with ~11B active parameters per token for lower per-token compute.

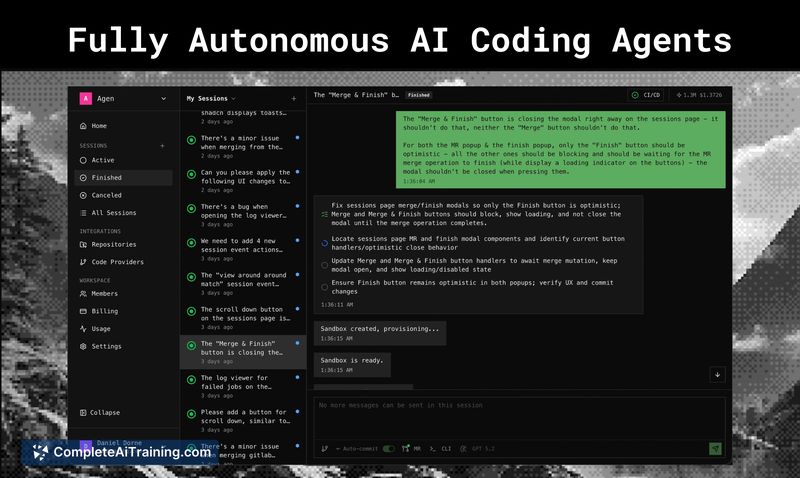

- Native OpenClaw integration for agent-driven workflows and multi-step loops.

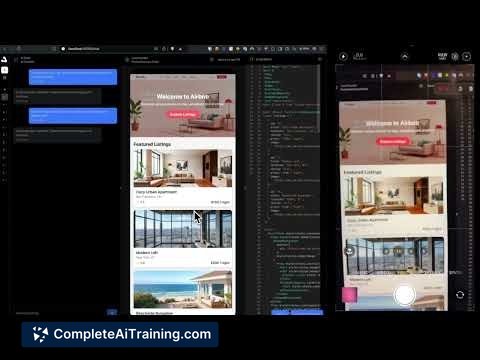

- Reported throughput of up to ~350 tokens/second on coding workloads using MTP-3.

- Reported score of ~74.4% on SWE-bench, along with reliable long-context handling.

- Open-source availability with options to test via OpenRouter free quota or the official API.
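
For readers who want to try the OpenRouter route, the following is a minimal sketch of building a chat request against OpenRouter's OpenAI-compatible endpoint using only the Python standard library. The model slug is a hypothetical placeholder (the review does not give one, so look it up in the OpenRouter catalog), and `build_chat_request` and `send` are illustrative helper names, not part of any official SDK.

```python
import json
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical placeholder: replace with the real slug from the OpenRouter catalog.
MODEL_SLUG = "<provider>/<step-3.5-flash-slug>"


def build_chat_request(prompt, api_key, model=MODEL_SLUG, max_tokens=512):
    """Build (without sending) a single-turn chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request, timeout=60):
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

With a real slug and key in place, a call would look like `send(build_chat_request("Write a binary search in Go.", api_key))`. Free-quota limits and model availability are set by OpenRouter and may change.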

Pricing and Value

The model is available as an open-source release, with free testing through OpenRouter's quota and access via an official API. Its value comes from reduced compute per token compared with a dense model of similar total size, since only ~11B of the 196B parameters are active for any given token. This can lower runtime costs for extended agent loops and coding tasks. That said, production use may require managed API access or specialized serving infrastructure, which could affect total cost of ownership.
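
The trade-off can be made concrete with some back-of-the-envelope arithmetic. The sketch below uses the parameter counts quoted in this review plus two assumptions that are not from the source: the common rule of thumb of about 2 FLOPs per active parameter per generated token (which ignores attention cost over the context), and weight-only memory (which ignores KV cache and activations).

```python
TOTAL_PARAMS = 196e9   # total parameters, as quoted in this review
ACTIVE_PARAMS = 11e9   # approximate active parameters per token, as quoted


def forward_flops_per_token(active_params):
    """Rule-of-thumb estimate: ~2 FLOPs per active parameter per token."""
    return 2 * active_params


def weight_memory_gb(total_params, bytes_per_param):
    """Memory for the weights alone, in decimal GB."""
    return total_params * bytes_per_param / 1e9


moe_flops = forward_flops_per_token(ACTIVE_PARAMS)
dense_flops = forward_flops_per_token(TOTAL_PARAMS)

print(f"active fraction:        {ACTIVE_PARAMS / TOTAL_PARAMS:.1%}")
print(f"compute vs. dense 196B: {dense_flops / moe_flops:.1f}x lower")
print(f"weights at 16-bit:      {weight_memory_gb(TOTAL_PARAMS, 2):.0f} GB")
print(f"weights at 4-bit:       {weight_memory_gb(TOTAL_PARAMS, 0.5):.0f} GB")
```

Under these assumptions only about 5.6% of the parameters do work on each token, yet all 196B weights (roughly 392 GB at 16-bit precision) still have to be resident somewhere. That is why per-token compute can be low while serving infrastructure remains a real cost.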

Pros

- Sparse parameter activation reduces per-token compute, and with it latency, for many tasks.

- Good fit for agentic workflows due to native OpenClaw support.

- High coding throughput and competitive benchmark performance for software engineering tasks.

- Handles long contexts well, which benefits multi-step agent interactions and extended prompts.

- Open-source distribution makes it accessible for experimentation and custom deployments.

Cons

- MoE serving requires routing and infrastructure that can be more complex than dense models.

- The overall model size and MoE architecture can make local deployment and fine-tuning more difficult for small teams.

- Tooling and ecosystem support are still maturing compared with long-established model offerings.
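
To illustrate what "routing" means in the first point above, here is a toy sketch of generic top-k expert gating in plain Python. It is not Step 3.5 Flash's actual router: the number of experts, the value of `k`, and the function names are all assumptions chosen for illustration, since the review does not describe the model's routing design.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k):
    """Return [(expert_index, weight), ...] for the k highest-scoring experts."""
    order = sorted(range(len(router_logits)),
                   key=lambda i: router_logits[i], reverse=True)
    top = order[:k]
    # Renormalize so the selected experts' weights sum to 1.
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_layer(token, router_logits, experts, k=2):
    """Weighted sum of the outputs of only the k selected experts."""
    return sum(w * experts[i](token) for i, w in route_top_k(router_logits, k))


# Toy setup: four "experts" that are just scalar functions.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
logits = [0.1, 2.0, 2.0, -1.0]  # hypothetical router scores for one token

selected = route_top_k(logits, k=2)
output = moe_layer(3.0, logits, experts, k=2)
print(selected, output)
```

Only the selected experts run for each token, which is where the compute savings come from. In a real deployment, different tokens in a batch pick different experts, often on different devices, and that dispatch and load balancing is the extra serving complexity referred to above.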

Overall, Step 3.5 Flash is a strong option for developers, research teams, and organizations that are building agentic workflows or automated coding systems and can accommodate MoE serving requirements. Smaller teams, or those without compatible serving infrastructure, may prefer smaller dense models that are easier to deploy locally.
