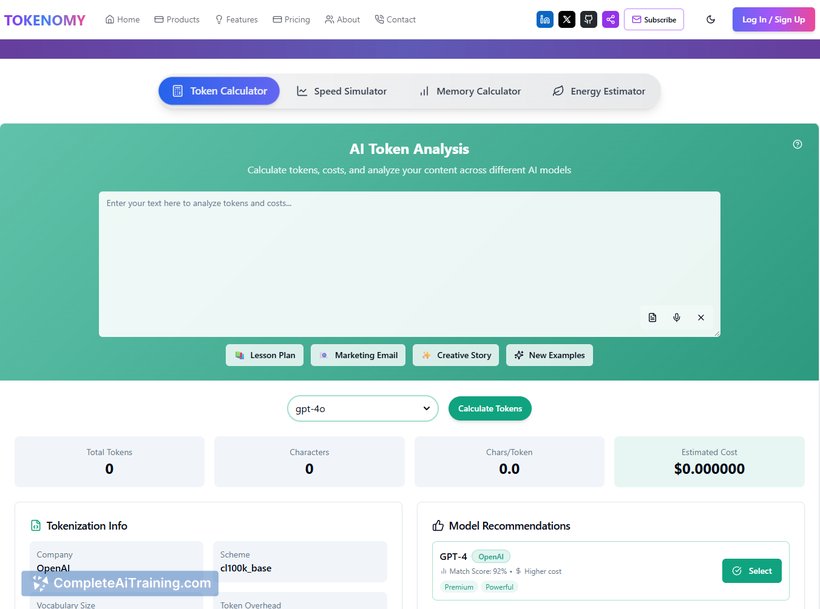

About Tokenomy.ai

Tokenomy.ai is a developer-focused tool that estimates token usage and cost for large language models such as GPT-4o and Claude before an API call is made. It is available as a VS Code sidebar, a CLI, and a LangChain callback, and provides real-time estimates to help manage and reduce unexpected expenses.

Review

Tokenomy.ai addresses a common challenge for developers working with language models: unpredictable token consumption and the costs that come with it. By showing a cost estimate before a request is sent, it lets teams monitor and control their spending more effectively and supports budget-conscious development workflows.

Key Features

- Real-time token usage and cost prediction for multiple LLMs like GPT-4o and Claude.

- Integration with VS Code as a sidebar for seamless developer experience.

- Command Line Interface (CLI) support for flexible usage in various environments.

- LangChain callback integration to provide cost estimates within workflows.

- Cost-saving tips and alerts to prevent unexpected high bills.
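To make the idea of pre-call estimation concrete, here is a minimal sketch of how a token and cost estimate can be computed before a request is sent. It is not Tokenomy.ai's implementation or API. The model names, the prices, and the four-characters-per-token rule of thumb are all assumptions chosen for illustration; a real tool would use each model's actual tokenizer and current price list.

```python
import math

# Hypothetical input prices in USD per million tokens.
# These are placeholder values, not real vendor rates.
PRICE_PER_MILLION_INPUT_TOKENS = {
    "model-a": 2.50,
    "model-b": 3.00,
}

# Rough rule of thumb for English text; real tokenizers will differ.
CHARS_PER_TOKEN = 4


def estimate_tokens(prompt: str) -> int:
    """Approximate the token count of a prompt from its character length."""
    return math.ceil(len(prompt) / CHARS_PER_TOKEN)


def estimate_cost(prompt: str, model: str) -> float:
    """Approximate the input cost in USD of sending the prompt to the model."""
    tokens = estimate_tokens(prompt)
    return tokens / 1_000_000 * PRICE_PER_MILLION_INPUT_TOKENS[model]


def within_budget(prompt: str, model: str, budget_usd: float) -> bool:
    """Return True if the estimated cost does not exceed the budget."""
    return estimate_cost(prompt, model) <= budget_usd


if __name__ == "__main__":
    prompt = "Summarize the following report in three bullet points."
    print(estimate_tokens(prompt), "tokens (approximate)")
    print(within_budget(prompt, "model-a", budget_usd=0.01))
```

A check like `within_budget` could run before each API call and raise an alert when the estimate exceeds a threshold, which is the kind of guardrail the features above describe.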

Pricing and Value

Tokenomy.ai is free at launch, and early supporters can unlock a 30-day trial of the Pro version with a special code. This lets developers and teams evaluate the tool’s value without upfront costs. Because it can prevent expensive token overuse, the tool offers strong value for those who use LLM APIs heavily.

Pros

- Helps prevent surprise billing by estimating token usage before execution.

- Supports popular development environments and workflows through VS Code and CLI.

- Provides actionable cost-saving recommendations.

- Easy to integrate with existing LangChain-based projects.

- Free trial period encourages hands-on evaluation.

Cons

- Currently supports only a limited set of LLMs, which may restrict broader applicability.

- New product with limited user reviews and community feedback at this stage.

- Advanced features and pricing beyond the trial period have not yet been fully disclosed.

Overall, Tokenomy.ai is well suited to developers and teams who frequently call large language model APIs and need better visibility into token consumption and costs. It is especially useful for those who work in VS Code or use LangChain and want to keep API expenses predictable and manageable.
