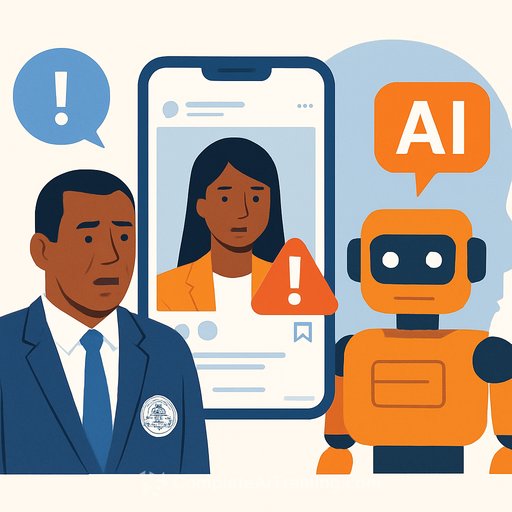

FBI Warns of AI-Powered Scams Targeting U.S. Government Officials

The FBI issued an alert on May 15 warning of an ongoing campaign in which malicious actors impersonate senior U.S. government officials using AI-generated voice messages and text messages. The campaign, which began in April and remains active, aims to trick government officials and their contacts into revealing the credentials for their personal accounts.

The attackers send text messages and AI-generated voice messages that convincingly mimic high-ranking officials. Their primary targets are current and former senior federal and state government officials, along with their contacts. The FBI urges caution: if you receive a message claiming to be from a senior U.S. official, don’t assume it’s authentic until you have verified it.

AI and Deepfake Technology in Scams

This case highlights how scammers employ AI and deepfake technology to replicate voices and appearances with high accuracy. As AI models improve, phishing campaigns become more sophisticated and harder to detect.

A notable example occurred during the 2024 New Hampshire presidential primary, when a robocall featuring a deepfake of President Joe Biden’s voice urged Democrats not to vote. The Federal Communications Commission fined the political consultant behind the scheme $6 million, and he was also criminally charged in New Hampshire for attempting to suppress voter turnout.

Real-World Impact on Individuals and Organizations

Deepfake scams are not limited to government targets. On May 13, Polygon co-founder Sandeep Nailwal revealed that scammers had impersonated him with deepfakes in Zoom meetings. They hijacked a team member’s Telegram account to lure victims into the fake calls and then tried to get them to install malicious software.

Nailwal explained that the scammers keep the call’s audio disabled, then pressure victims into installing a script, supposedly to fix the sound, that is actually malicious. He also criticized how difficult it is to report such incidents on platforms like Telegram and called for better mechanisms for flagging fraudulent accounts.

Others in the Web3 community have reported similar deepfake impersonation attempts, a sign that the threat now extends well beyond government.

How Government Employees Can Protect Themselves

- Always verify the identity of anyone claiming to be a senior official before responding.

- Check sender details, such as phone numbers, email addresses, and links, for subtle inconsistencies or misspellings.

- Look out for unnatural features in images or videos, such as distorted body parts or faces.

- Avoid clicking on suspicious links or sharing sensitive information with unknown contacts.

- Use two-factor or multifactor authentication on all accounts wherever possible.

- Never install software or scripts during unsolicited online interactions.

The FBI warns that AI-generated content is becoming increasingly difficult to distinguish from real communication. If you’re unsure about a message's legitimacy, contact your agency’s security team or reach out to the FBI for assistance.

