Maine Government Adopts Cautious Approach to AI, Requiring Human Oversight

Maine's executive branch agencies can now use generative AI tools, but only under strict conditions that require human review of all outputs and ban the entry of confidential data into external systems.

The Maine Office of Information Technology issued a formal policy in early 2024 after conducting a nine-month review of the technology's security and privacy risks. The policy allows limited use of AI chatbots and automated content systems while placing safeguards around sensitive government information.

The central restriction: employees cannot input confidential or restricted government data into generative AI systems unless the technology has been formally approved through the state's review process. This prevents sensitive information from being transmitted to external systems that may store data outside the state's secure networks.

All AI-generated work must pass human review before use in government operations. Generative AI systems can produce inaccurate or fabricated information, a problem known as "hallucinations," so employees remain responsible for verifying accuracy.

How the Policy Came Together

Maine's caution stems from the rapid rise of tools like ChatGPT in 2023. State cybersecurity staff temporarily barred executive branch agencies from using generative AI while they studied the technology's implications.

"In June 2023, Maine's Office of Information Technology imposed a six-month moratorium on the use of Generative AI in executive branch agencies," said Sharon Huntley, director of communications for the Department of Administrative and Financial Services. The pause was extended three additional months to allow time for risk assessments.

The state examined security and privacy threats, potential bias and ethical issues, and federal guidance before developing the policy framework. Cybersecurity leaders warned that AI systems can produce unpredictable results and expose government data if used improperly.

Gov. Janet Mills signed an executive order in 2024 creating a statewide artificial intelligence task force to study the technology's effects on Maine's economy, workforce, and public institutions across sectors like healthcare, education, manufacturing, and government operations.

Schools and Police Take Similar Cautious Approaches

Educators and law enforcement are following similar patterns, using AI for specific tasks while maintaining human control.

Cherry Poirier, a staff member at Spruce Mountain Middle School in Jay, uses AI to create differentiated materials for students in special education. But she does not allow students to use the technology for their own work.

"In our school, the students are not allowed to use it for their work as they still need to develop critical thinking and foundational skills," Poirier said. She also flagged broader concerns about environmental impact and cognitive effects from overuse.

Farmington Police Chief Kenneth Charles said the department uses AI for processing public records requests under Maine's Freedom of Access Act, specifically for redacting restricted information. But he stressed the technology is "not fail-safe."

"While it may be a useful tool, the employee is still responsible for their work product," Charles said. The department is developing a formal policy to ensure employees remain accountable for accuracy and data protection.

What Government Agencies Can Use AI For

Maine's policy acknowledges that AI may eventually provide benefits for government operations. The technology can analyze large volumes of information, summarize documents, and assist with repetitive administrative tasks.

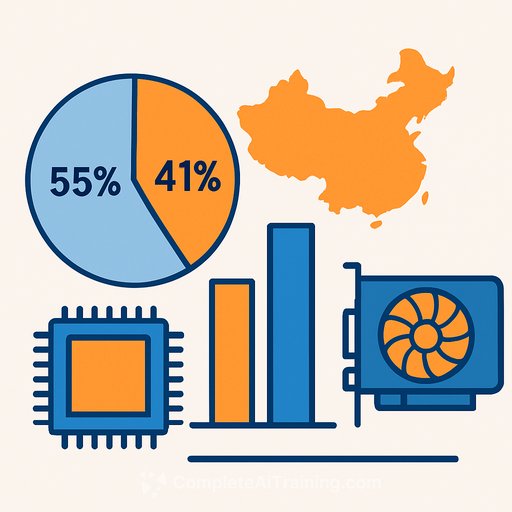

Agencies seeking to use new AI tools must go through a formal review process with the MaineIT Architecture and Policy Team. The state uses a classification system based on federal cybersecurity standards to limit AI use to low-risk information.

Huntley said the policy requires what officials call "the Human in the Loop" as a compensating control in all cases. MaineIT also evaluates whether AI systems can produce incorrect results and checks the product's internal controls to guard against failures.

For now, Maine treats artificial intelligence as a tool to be studied and carefully tested rather than widely deployed across government operations. "MaineIT and State of Maine use of Generative AI tools will continue to evolve and expand while carefully ensuring the safety and security of the data and resources that Maine people entrust in our care," Huntley said.

