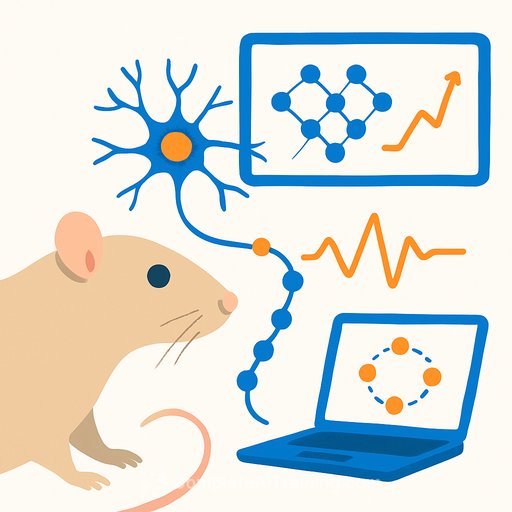

Living Rat Neurons Learn to Generate Complex Math Patterns

Researchers at Tohoku University and Future University Hakodate have trained cultured rat brain cells to perform machine learning tasks typically handled by artificial systems. The neurons successfully learned to generate complex time-series signals, including the Lorenz attractor, a chaotic mathematical pattern used to model weather systems.

The work, published in the Proceedings of the National Academy of Sciences on March 12, 2026, demonstrates that biological neural networks can function as computational resources. The team integrated living neurons into a "reservoir computing" framework, a machine learning approach that exploits the natural complexity of a network to process data.

How the System Works

Reservoir computing doesn't train every neuron in a network. Instead, it trains only the "readout" layer, the part that interprets the network's activity. The researchers applied a technique called FORCE learning, which adjusts the readout in real time based on the error between the output and the target signal. This was the first successful application of FORCE learning to biological neurons.
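
To make the readout-only idea concrete, here is a minimal Python sketch of the recursive least squares (RLS) update that FORCE learning is built on. It is an illustration under stated assumptions, not the team's implementation. The `reservoir_state` function is a hypothetical simulated stand-in for recorded neural activity, the parameter values (`n_units`, `alpha`, `dt`, `n_steps`) are arbitrary, and the loop runs open-loop, whereas FORCE learning also feeds the output back into the reservoir.

```python
import math
import random


def reservoir_state(t, gains, phases, period):
    """Hypothetical stand-in for reservoir activity at time t.

    Assumption: fixed tanh units driven by a periodic signal. In the study
    the reservoir is a living culture, not a simulation like this one.
    """
    omega = 2.0 * math.pi / period
    return [math.tanh(g * math.sin(omega * t + p)) for g, p in zip(gains, phases)]


def rls_step(w, P, x, target):
    """Update readout weights w and matrix P in place; return the error."""
    n = len(x)
    output = sum(wi * xi for wi, xi in zip(w, x))
    error = output - target  # error measured before the weights change
    Px = [sum(P[i][j] * x[j] for j in range(n)) for i in range(n)]
    denom = 1.0 + sum(xi * pxi for xi, pxi in zip(x, Px))
    for i in range(n):
        for j in range(n):
            P[i][j] -= Px[i] * Px[j] / denom  # P <- P - (Px)(Px)^T / denom
    for i in range(n):
        w[i] -= error * Px[i] / denom  # only the readout is adjusted
    return error


def train_readout(n_units=20, period=4.0, dt=0.05, n_steps=800, alpha=1.0, seed=0):
    """Fit a readout to a sine target; the reservoir itself is never changed."""
    rng = random.Random(seed)
    gains = [rng.uniform(0.5, 2.0) for _ in range(n_units)]
    phases = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_units)]
    w = [0.0] * n_units
    P = [[1.0 / alpha if i == j else 0.0 for j in range(n_units)]
         for i in range(n_units)]
    for k in range(n_steps):
        t = k * dt
        x = reservoir_state(t, gains, phases, period)
        rls_step(w, P, x, math.sin(2.0 * math.pi * t / period))
    return w, gains, phases


def mean_squared_error(w, gains, phases, period=4.0, dt=0.05, n_steps=800):
    """Average squared gap between the trained readout and the sine target."""
    total = 0.0
    for k in range(n_steps):
        t = k * dt
        x = reservoir_state(t, gains, phases, period)
        output = sum(wi * xi for wi, xi in zip(w, x))
        total += (output - math.sin(2.0 * math.pi * t / period)) ** 2
    return total / n_steps
```

Calling `train_readout()` and passing the result to `mean_squared_error` gives a quick check that the fitted readout tracks the 4-second sine target. Note that `gains` and `phases` are never modified during training. Only the weight vector `w` changes, which is the defining feature of reservoir computing.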

The key technical innovation was using microfluidic devices, tiny channels that guide how neurons grow. By creating modular neighborhoods of cells, the researchers prevented neurons from firing in unison, which would destroy the complex dynamics needed for computation.

What the Neurons Learned

The biological network went beyond simple sine waves. It also generated triangular waves, square waves, and the chaotic Lorenz attractor. The same system learned to produce waves with periods ranging from 4 to 30 seconds, showing that living networks can adapt flexibly to different tasks.
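
The target signals named above are easy to write down, which helps show what the readout was asked to reproduce. The Python sketch below is illustrative only. The function names are hypothetical, amplitudes are normalized to the range -1 to 1 as an assumption, and the Lorenz parameters (sigma = 10, rho = 28, beta = 8/3) are the classic textbook values, not necessarily those used in the study.

```python
import math


def sine_wave(t, period):
    """Sine target with the given period in seconds."""
    return math.sin(2.0 * math.pi * t / period)


def square_wave(t, period):
    """Square target: +1 for the first half of each period, -1 for the second."""
    return 1.0 if (t % period) < period / 2.0 else -1.0


def triangle_wave(t, period):
    """Triangle target aligned with the sine (same zero crossings and peaks)."""
    return (2.0 / math.pi) * math.asin(math.sin(2.0 * math.pi * t / period))


def lorenz_step(state, dt, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Advance the Lorenz system by one fourth-order Runge-Kutta step."""
    def deriv(s):
        x, y, z = s
        return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = deriv(state)
    k2 = deriv(shifted(state, k1, dt / 2.0))
    k3 = deriv(shifted(state, k2, dt / 2.0))
    k4 = deriv(shifted(state, k3, dt))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def lorenz_series(n_steps, dt=0.01, start=(1.0, 1.0, 1.0)):
    """Return the x-coordinate of a Lorenz trajectory as a time series."""
    state = start
    xs = []
    for _ in range(n_steps):
        state = lorenz_step(state, dt)
        xs.append(state[0])
    return xs
```

Sweeping `period` from 4.0 to 30.0 in the periodic generators covers the range reported for the trained culture. The Lorenz series, by contrast, never repeats exactly, which is what makes it a harder target.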

Why This Matters for Research

Biological systems process information in parallel, carrying out many computations simultaneously, with minimal energy consumption. Artificial neural networks require significant power to achieve comparable performance.

The platform could extend beyond pure computation. Researchers plan to use it as a testing ground for drug responses and to model neurological disorders without animal testing. A biological system that mimics brain circuits offers a more realistic testbed than current alternatives.

Next Steps

The team aims to improve stability after training and reduce feedback delays. They're also refining the FORCE learning algorithm to make the system more reliable for longer-term use.

This work sits at the intersection of neuroscience and machine learning. For researchers working in either field, the implications are straightforward: living cells may serve as viable alternatives or complements to silicon-based computing for time-dependent problems.
