AI-Generated Fake News Now Demands Private Verification, Not Just Government Rules

Artificial intelligence can now produce convincing fake videos, images, and audio at scale. Governments alone cannot stop them. The solution requires private-sector verification systems working alongside government oversight, with citizens trained to spot manipulated content.

The problem is accelerating. In May 2023, an AI-generated image of an explosion near the Pentagon spread online and briefly moved financial markets. In January 2024, AI-generated robocalls imitating President Joe Biden's voice reached New Hampshire voters before the Democratic primary. More recently, scammers have used AI to create fake real estate agents and fictitious property listings, stealing preliminary deposits from victims.

These are not isolated incidents. Deepfake audio and video crimes are growing in the United States, with criminals impersonating sellers, brokers, and counselors to divert funds or extract personal information. What separates today's fake news from earlier fabrications is speed and scale: falsehoods are now mass-produced and spread instantly across platforms.

Government Action Has Real Limits

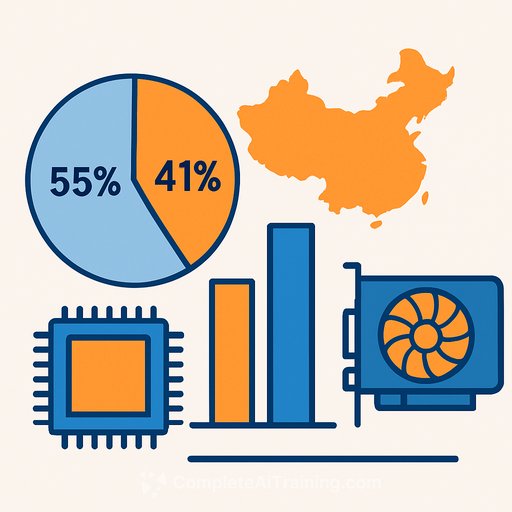

Governments are responding. South Korea introduced an AI Basic Act and mandated watermark labeling on deepfake content. But these measures arrive late, after AI capabilities have already advanced beyond them.

Stronger government oversight carries its own risk. Excessive regulation could suppress legitimate reporting and give officials a tool to brand inconvenient stories as "fake news."

Private Sector Must Lead Verification

The Poynter Institute, a Florida-based nonprofit, has spent years building standards for fact-checking and conducting AI literacy training for journalists and the public. Its research-backed approach has gained influence in American media circles.

South Korea lacks an equivalent. Its response has been scattered across individual news organizations, platforms, and self-regulatory bodies, and no coordinated private-sector verification system exists.

Government institutions and voluntary private-sector verification must advance together. Responsible content production, independent verification, and citizen AI literacy must work in parallel. Only then do AI-generated falsehoods lose their power to manipulate.

For government workers overseeing technology policy, this means supporting private-sector standards while resisting the urge to regulate content directly. The goal is building institutional capacity to verify truth, not centralizing control over what counts as true.
